Introduction

Description

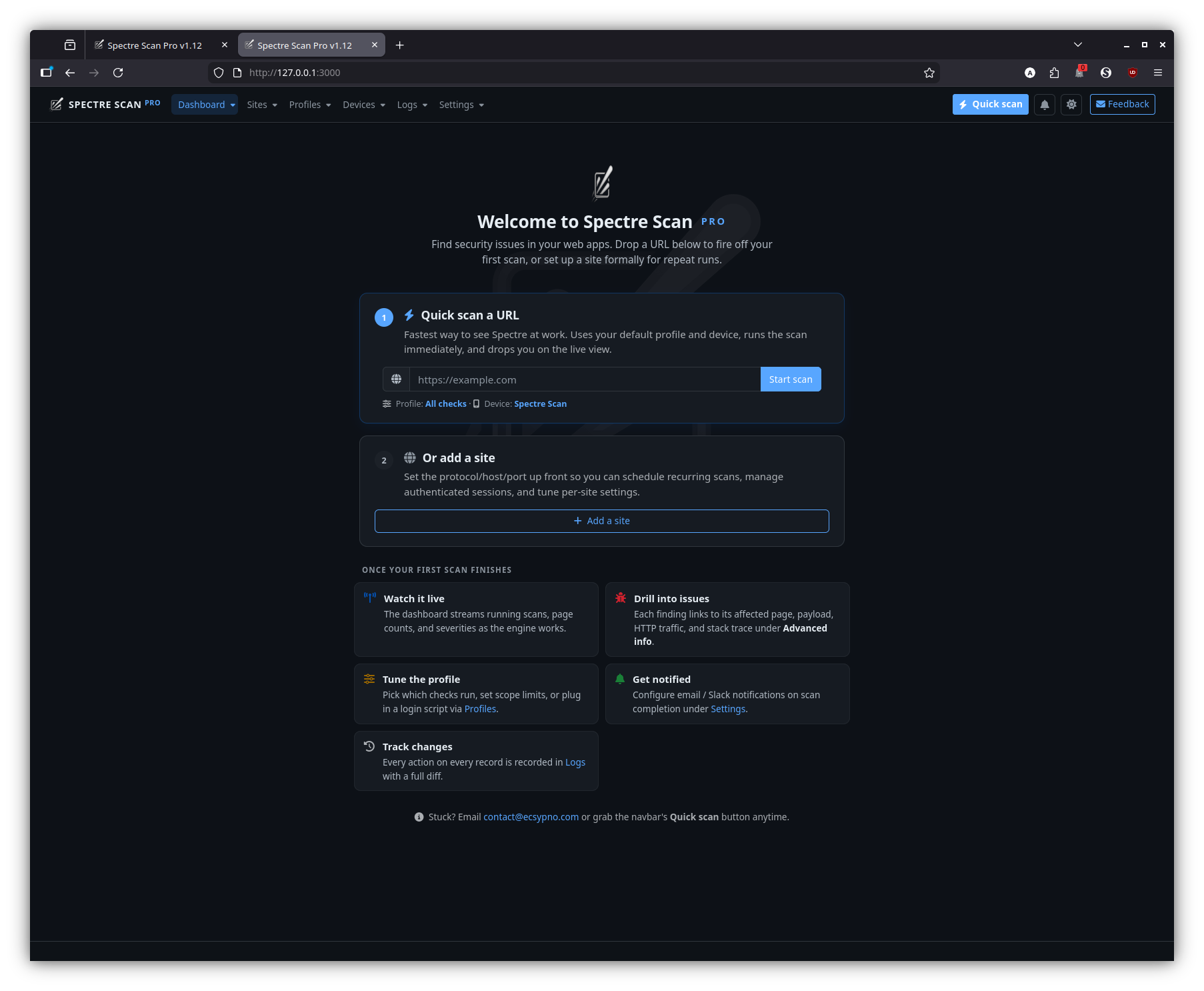

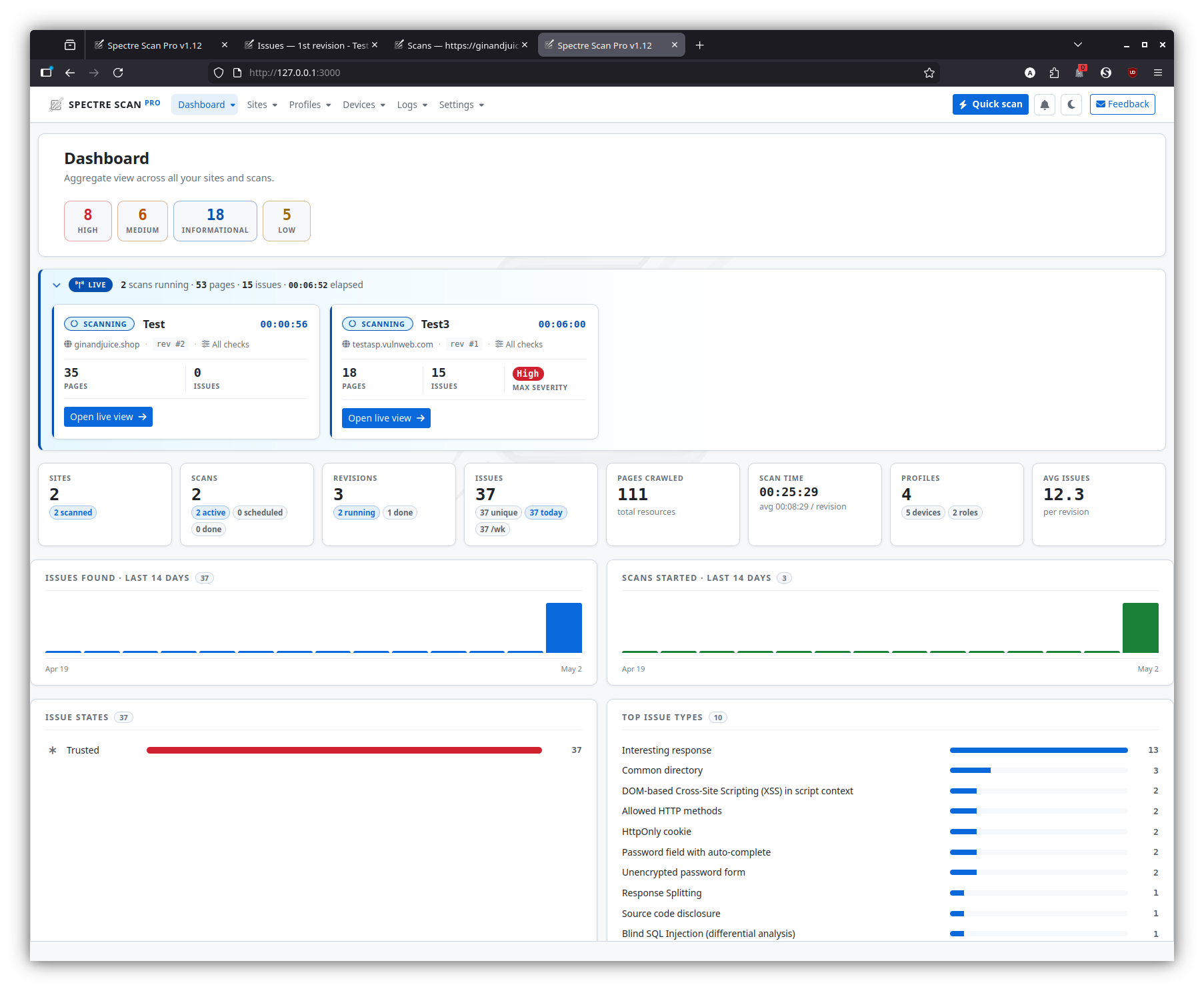

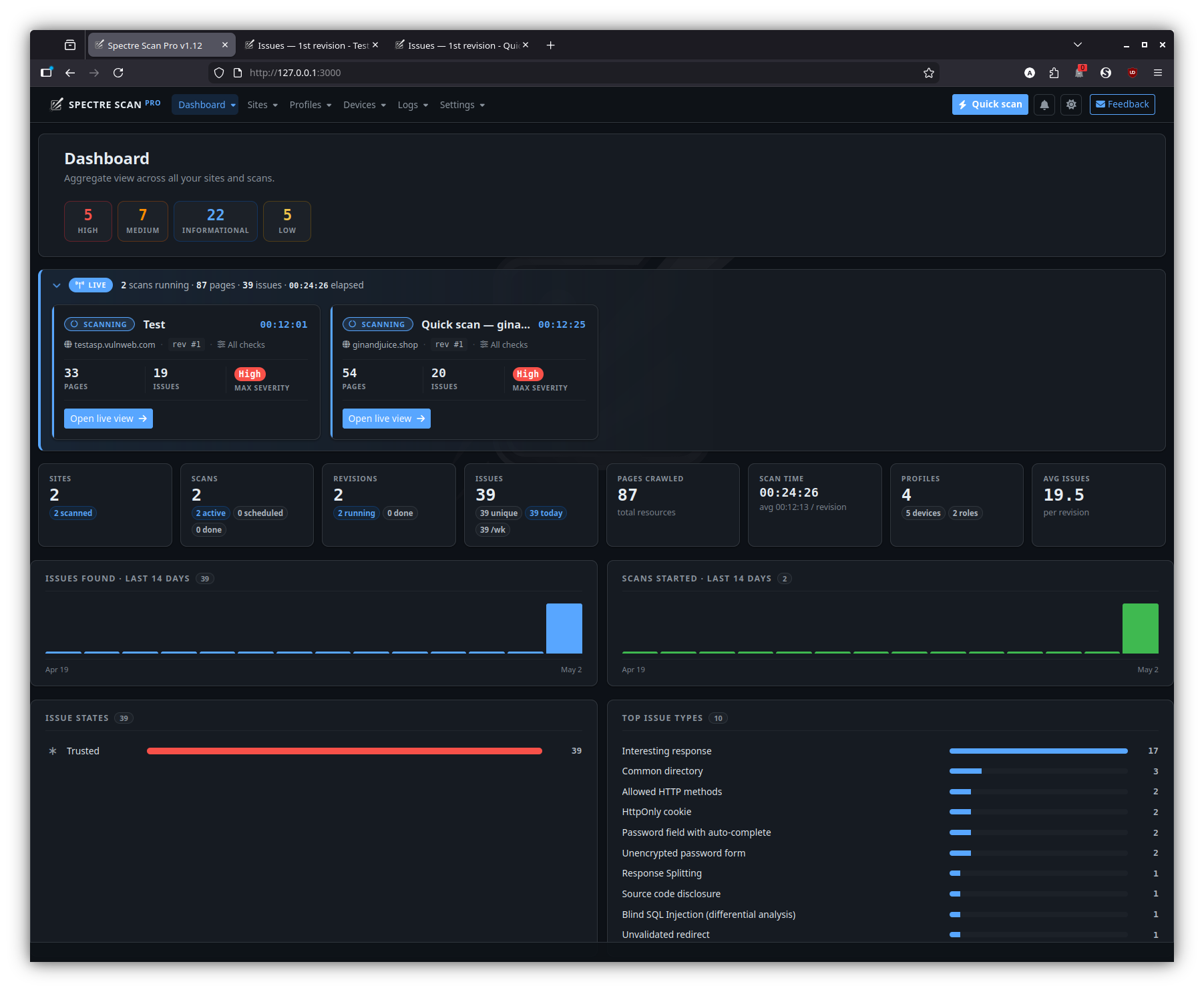

Spectre Scan is a modular, distributed, high-performance DAST web application security scanner framework, capable of analyzing the behavior and security of modern web applications and web APIs.

You can access Spectre Scan via multiple interfaces, such as:

Back-end support

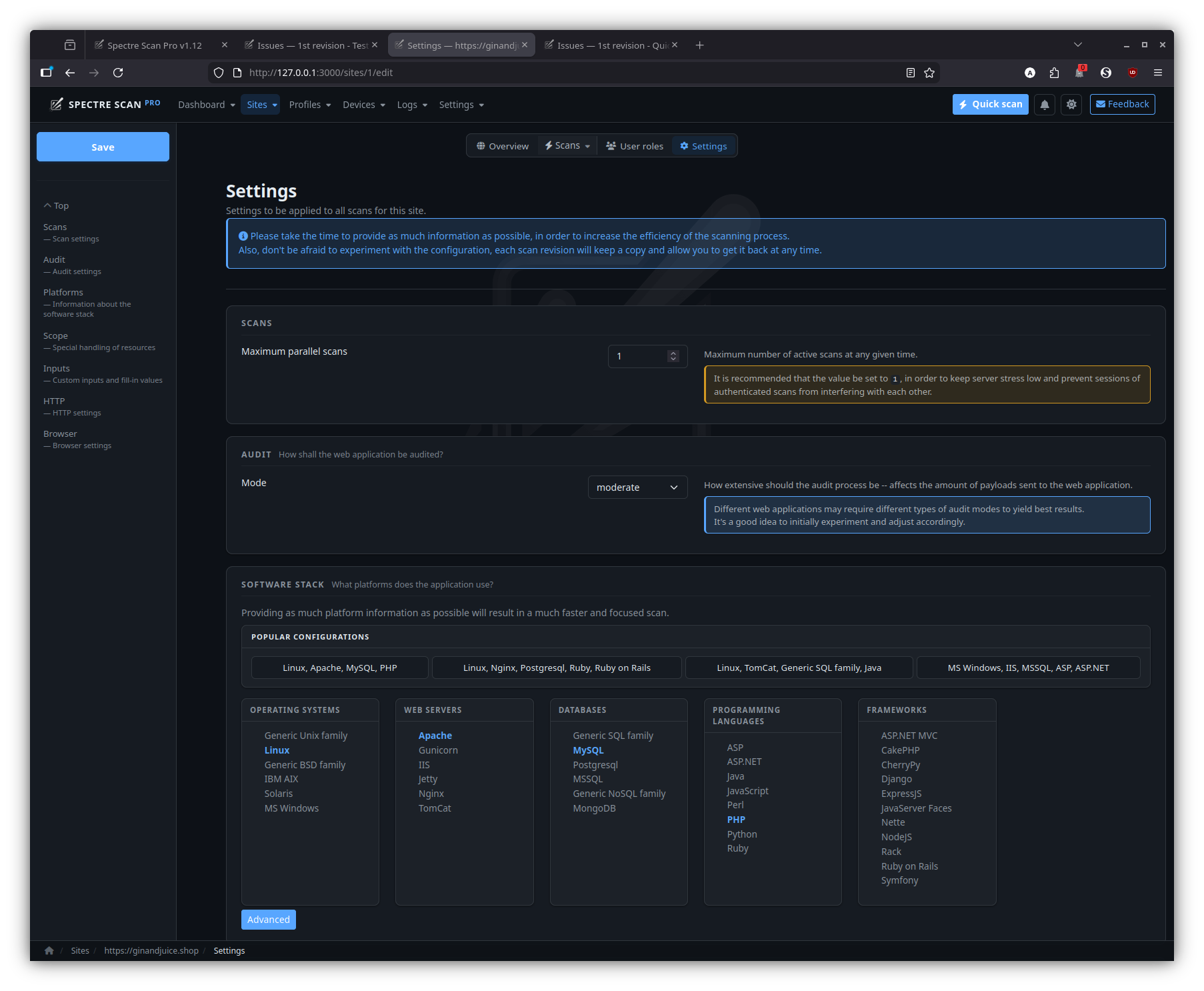

A wide range of back-end technologies is supported, including:

- Operating systems

- BSD

- Linux

- Unix

- Windows

- Solaris

- Databases

- SQL

- MySQL

- PostgreSQL

- MSSQL

- Oracle

- SQLite

- Ingres

- EMC

- DB2

- Interbase

- Informix

- Firebird

- MaxDB

- Sybase

- Frontbase

- HSQLDB

- Access

- NoSQL

- MongoDB

- SQL

- Web servers

- Apache

- IIS

- Nginx

- Tomcat

- Jetty

- Gunicorn

- Programming languages

- PHP

- ASP

- ASPX

- Java

- Python

- Ruby

- JavaScript

- Frameworks

- Rack

- CakePHP

- Rails

- Django

- ASP.NET MVC

- JSF

- CherryPy

- Nette

- Symfony

- NodeJS

- Express

This list keeps growing, but new platforms, or a failure to fingerprint supported ones, don’t disable the Spectre Scan engine; they merely force it to be more extensive in its scan.

Upon successful identification or configuration of platform types, the scan will be much more focused, less resource intensive and require less time to complete.
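To illustrate why an identified platform shortens a scan, here is a minimal sketch in plain Ruby. It is not the Spectre Scan API: the `PAYLOADS` table, its placeholder entries and the `payloads_for` helper are all made up for this example. It only shows the general idea of narrowing the work to the identified platforms and falling back to everything when nothing was identified.

```ruby
# Hypothetical payload table keyed by platform; the entries are placeholders
# and are not taken from Spectre Scan.
PAYLOADS = {
  mysql:      ['mysql-probe-1', 'mysql-probe-2'],
  postgresql: ['pgsql-probe-1'],
  mongodb:    ['mongodb-probe-1']
}.freeze

# Returns the payloads relevant to the identified platforms. When no known
# platform was identified, every payload is used (the more extensive scan).
def payloads_for( platforms )
  known = platforms & PAYLOADS.keys
  known = PAYLOADS.keys if known.empty?

  PAYLOADS.values_at( *known ).flatten
end

puts "Focused:   #{payloads_for( [:mysql] ).size} payloads"
puts "Extensive: #{payloads_for( [] ).size} payloads"
```

With a single identified database only its own probes are sent, which is where the savings in time and resources would come from.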

Front-end support

HTML5, modern JavaScript APIs and modern DOM APIs are supported by basing their execution and analysis on Google Chromium.

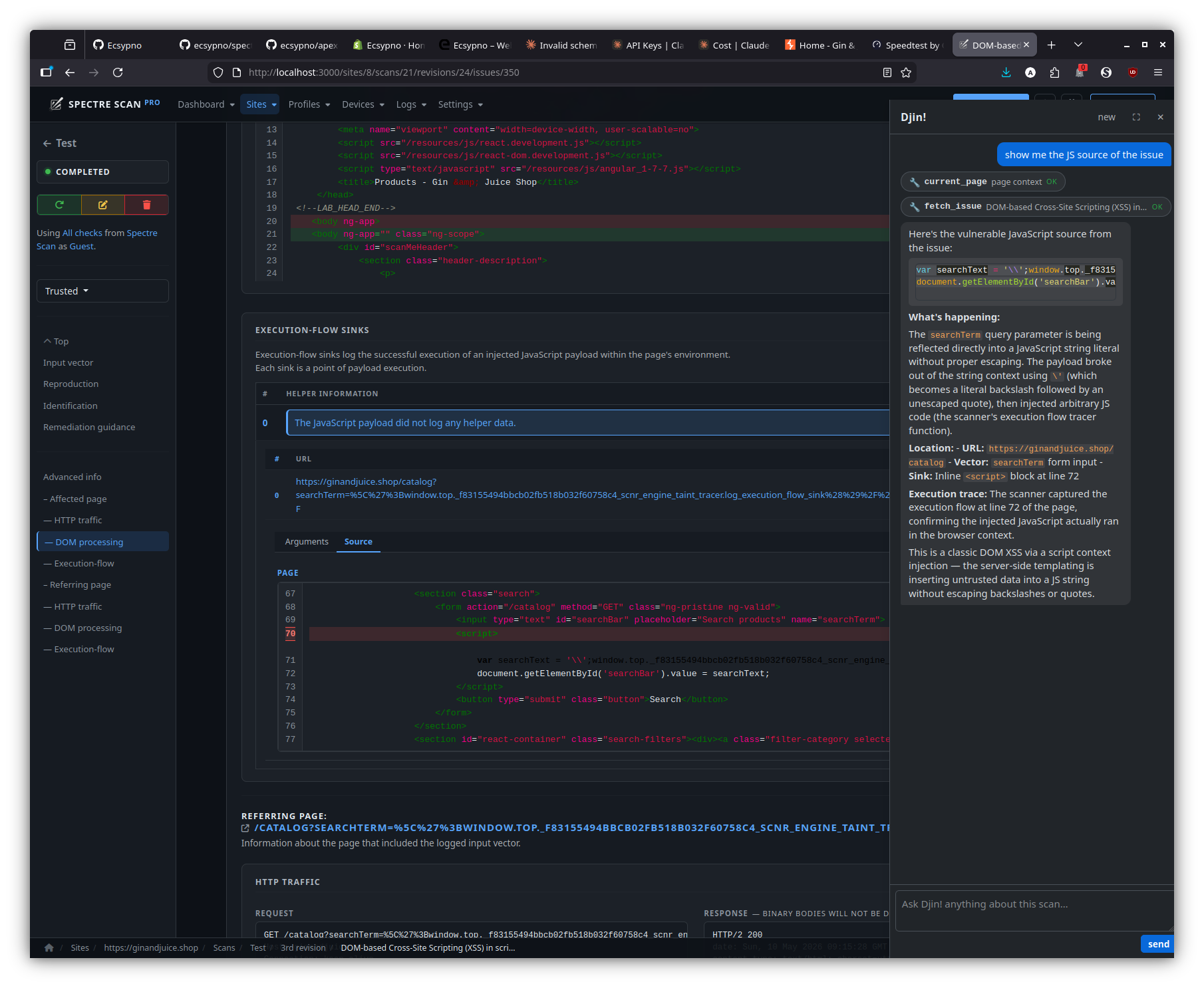

Spectre Scan injects a custom environment to monitor JS objects and APIs in order to trace execution and data flows. This provides in-depth reporting on how a client-side security issue was identified, which also greatly assists in its remediation.

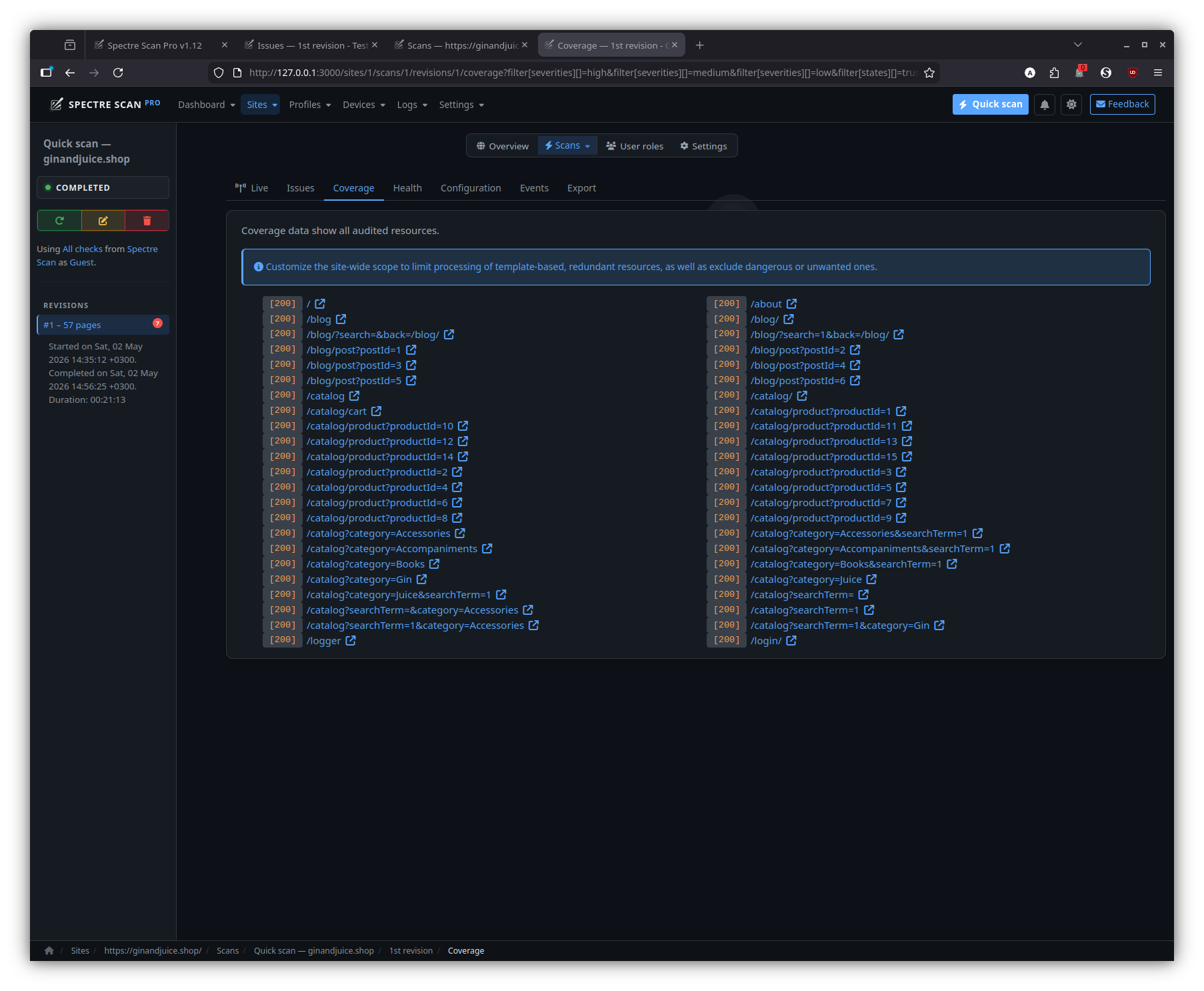

Incremental scans

Save valuable time by re-scanning only what has changed, rather than running full scans every single time.

To save time on subsequent scans of the same target, Spectre Scan lets you extract a session file from completed or aborted scans and use it for incremental re-scans.

This means that only newly introduced input vectors will be audited the next time around, which saves immense amounts of time from your workflow.

For example, a seed (first) scan of a website that requires an hour to complete can result in re-scan times of less than 10 minutes, depending on how many new input vectors were introduced.
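The idea behind incremental re-scans can be sketched in plain Ruby as a set difference between the input vectors recorded by an earlier scan and those found now. This is only an illustration: the `Vector` struct, the way a vector is identified (type, URL and input name) and the `vectors_to_audit` helper are assumptions made for the example, not the format of the session file.

```ruby
require 'set'

# An input vector is identified here by its type, URL and input name; this
# identity scheme is an assumption made for the example.
Vector = Struct.new( :type, :url, :input )

# Keeps only the vectors that were not seen by a previous scan.
def vectors_to_audit( seen, current )
  current.reject { |vector| seen.include?( vector ) }
end

# Vectors recorded by the seed scan.
seen = Set[
  Vector.new( :form, '/login', 'username' ),
  Vector.new( :link, '/search', 'q' )
]

# Vectors discovered by the re-scan; one of them is new.
current = [
  Vector.new( :form, '/login', 'username' ),
  Vector.new( :link, '/search', 'q' ),
  Vector.new( :form, '/comments', 'body' )
]

new_vectors = vectors_to_audit( seen, current )
puts "Auditing #{new_vectors.size} of #{current.size} vectors."
```

The fewer new vectors a release introduces, the smaller the audited set, which is why re-scan times depend on how much has changed.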

Behavioral analysis

Spectre Scan will study the web application/service to identify how each input interacts with the front and back ends and tailor the audit for each specific input’s characteristics.

This results in highly self-optimized scans that use fewer resources, require less time to complete and put less stress on the server.

Training also continues during the audit process and new inputs that may appear during that time will be incorporated into the scan in whole.

Extendability

Its modular architecture allows for easy augmentation when it comes to security checks, arbitrary custom functionality in the form of plugins and bespoke reporting.

Entities which perform tasks crucial to the operation of a web scanner have been abstracted into components, so that more can easily be added by anyone in order to extend functionality.

Components are split into the following types:

- Checks – Security checks.

- Active – They actively engage the web application via its inputs.

- Passive – They passively look for objects.

- Plugins – Add arbitrary functionality to the system, accept options and run in parallel to the scan.

- Reporters – They export the scan results in several formats.

- Path extractors – They extract paths for the crawler to follow.

- Fingerprinters – They identify OS version, platforms, servers, etc.

Customization

Furthermore, scripted scans let you create tailor-made scans by moving decision-making points and configuration into user-specified methods; they can even be used to build a custom scanner for any web application, backed by the Spectre Scan engine.

The API is tidy and simple, and lets you plug in to key scan points in order to get the best results from any scan.

Scripts are written in Ruby and can thus be stored in your favorite VCS. This enables you to work side-by-side with the web application development team and keep the right script revision alongside the respective web application revision.

Scalability

No dependencies, no configuration; Spectre Scan can build a cloud of itself that allows you to scale both horizontally and vertically.

Scale up by plugging more nodes to its Grid, or down by unplugging them.

Furthermore, with multi-Instance scans you can not only distribute multiple scans across nodes, but also individual scans, for super fast scanning of large sites.

Finally, with its quick suspend-to-disk/restore feature, running scans can easily be moved from node to node, accommodating highly optimized load-balancing and cost saving policies.

Deployment

Deployment options include command-line utilities for direct scans, scripted scans (for configuration and custom scanners), and distributed deployments that perform scans from remote hosts and Grid/cloud/SaaS setups.

Its simple distributed architecture allows for easy creation of self-healing, load-balanced (vertically and horizontally) scanner grids, which in effect lets you build private scanner clouds in either your own or a cloud provider’s infrastructure.

Conclusion

Thus, Spectre Scan can in essence fit into any SDLC with great grace, ease and little care.

Installation

For installation instructions please refer to the installer.

System requirements

| Operating System | Architecture | RAM | Disk | CPU |
|---|---|---|---|---|
| Linux | x86 64-bit | 2 GB | 4 GB | Multicore |

Resource constrained environments

To optimize the resources a scan may use please consult:

In addition, Agents and other servers can have their max-slots adjusted to a user-specified value instead of the default, which is auto and based on the aforementioned system requirements.

Run each executable with the -h flag to see its available options and the applicable overrides.

Direct

The easiest approach is a direct scan using the spectre CLI executable.

To see all available options run:

bin/spectre -h

Example

The following command will run a scan with default settings against http://testhtml5.vulnweb.com.

bin/spectre http://testhtml5.vulnweb.com

Scripted

Scripted scans allow you to configure the system and take over decision making points for a much more fine-grained scan. Aside from that, scripts also allow you to quickly add custom components on the fly.

Scan scripts can either be a form of configuration or standalone scanners.

Examples

As configuration

With helpers

html5.config.rb:

SCNR::Engine::API.run do

require '/home/user/script/helpers'

Dom {

on :event, &method(:on_event_handler)

}

Checks {

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

as :not_found, check_404_info, method(:check_404)

}

Plugins {

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin, my_plugin_info, method(:my_plugin)

}

Scan {

Session {

to :login, &method(:login)

to :check, &method(:login_check)

}

Scope {

# Don't visit resources that will end the session.

reject :url, &method(:to_logout)

}

}

end

helpers.rb:

# Allow some time for the modal animation to complete in order for

# the login form to appear.

#

# (Not actually necessary, this is just an example of how to handle quirks.)

def on_event_handler( result, locator, event, options, browser )

return if locator.attributes['href'] != '#myModal' || event != :click

sleep 1

end

# Does something really simple, logs an issue for each 404 page.

def check_404

response = page.response

return if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

def check_404_info

{

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

}

}

end

def my_plugin

# Do stuff then wait until scan completes.

wait_while_framework_running

# Do stuff after scan completes.

end

def my_plugin_info

{

name: 'My Plugin',

description: 'Just waits for the scan to finish.'

}

end

def login( browser )

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

end

def login_check( &in_async_mode )

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, then the framework would rather

# schedule it to run asynchronously.

if in_async_mode

http_client.get SCNR::Engine::Options.url do |response|

in_async_mode.call check.call( response )

end

else

response = http_client.get( SCNR::Engine::Options.url, mode: :sync )

check.call( response )

end

end

def to_logout( url )

url.path.optimized_include?( 'login' ) ||

url.path.optimized_include?( 'logout' )

end

Single file

SCNR::Engine::API.run do

Dom {

# Allow some time for the modal animation to complete in order for

# the login form to appear.

#

# (Not actually necessary, this is just an example of how to handle quirks.)

on :event do |_, locator, event, *|

next if locator.attributes['href'] != '#myModal' || event != :click

sleep 1

end

}

Checks {

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

#

# Does something really simple, logs an issue for each 404 page.

as :not_found,

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

} do

response = page.response

next if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

}

Plugins {

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin do

# Do stuff then wait until scan completes.

wait_while_framework_running

# Do stuff after scan completes.

end

}

Scan {

Session {

to :login do |browser|

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

end

to :check do |async|

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, then the framework would rather

# schedule it to run asynchronously.

if async

http_client.get SCNR::Engine::Options.url do |response|

success = check.call( response )

async.call success

end

else

response = http_client.get( SCNR::Engine::Options.url, mode: :sync )

check.call( response )

end

end

}

Scope {

# Don't visit resources that will end the session.

reject :url do |url|

url.path.optimized_include?( 'login' ) ||

url.path.optimized_include?( 'logout' )

end

}

}

end

Supposing the above is saved as html5.config.rb:

bin/spectre http://testhtml5.vulnweb.com --script=html5.config.rb

Standalone

This basically creates a custom scanner.

The difference is that these scripts will run a scan and handle its results on their own, and not just serve as configuration.

With helpers

When a scan script is large-ish and/or complicated it’s better to split it into the main file and helper handler methods.

bin/spectre_script scanner.rb

scanner.rb:

require 'scnr/engine/api'

require "#{Options.paths.root}/tmp/scripts/with_helpers/helpers"

SCNR::Engine::API.run do

Scan {

# Can also be written as:

#

# options.set(

# url: 'http://testhtml5.vulnweb.com',

# audit: {

# elements: [:links, :forms, :cookies, :ui_inputs, :ui_forms]

# },

# checks: ['*']

# )

Options {

set url: 'http://my-site.com',

audit: {

elements: [:links, :forms, :cookies, :ui_inputs, :ui_forms]

},

checks: ['*']

}

# Scan session configuration.

Session {

# Login using the #fill_in_and_submit_the_login_form method from the helpers.rb file.

to :login, :fill_in_and_submit_the_login_form

# Check for a valid session using the #find_welcome_message method from the helpers.rb file.

to :check, :find_welcome_message

}

# Scan scope configuration.

Scope {

# Limit the scope of the scan based on URL.

select :url, :within_the_eshop

# Limit the scope of the scan based on Element.

reject :element, :with_sensitive_action; also :with_weird_nonce

# Only select pages that are in the admin panel.

select :page, :in_admin_panel

# Limit the scope of the scan based on Page.

reject :page, :with_error

# Limit the scope of the scan based on DOM events and DOM elements.

# In this case, never click the logout button!

reject :event, :that_clicks_the_logout_button

}

# Run the scan and handle the results (in this case print to STDOUT) using #handle_results.

run! :handle_results

}

Logging {

# Error and exception handling.

on :error, :log_error

on :exception, :log_exception

}

Data {

# Don't store issues in memory, we'll send them to the DB.

issues.disable(:storage).on :new, :save_to_db

# Could also be written as:

#

# Issues {

# disable(:storage)

# on :new, :save_to_db

# }

#

# Or:

#

# Issues { disable(:storage); on :new, :save_to_db }

# Store every page in the DB too for later analysis.

pages.on :new, :save_to_db

# Or:

#

# Pages {

# on :new, :save_to_db

# }

}

Http {

on :request, :add_special_auth_header

on :response, :gather_traffic_data; also :increment_http_performer_count

}

Checks {

# Add a custom check on the fly to check for something simple specifically

# for this scan.

as :missing_important_header, with_missing_important_header_info,

:log_pages_with_missing_important_headers

}

# Been having trouble with this scan, collect some runtime statistics.

plugins.as :remote_debug, send_debugging_info_to_remote_server_info,

:send_debugging_info_to_remote_server

# Serves PHP scripts under the extension 'x'.

fingerprinters.as :php_x, :treat_x_as_php

Input {

# Vouchers and serial numbers need to come from an algorithm.

values :with_valid_role_id

}

Dom {

# Let's have a look inside the live JS env of those interesting pages,

# setup the data collection.

before :load, :start_js_data_gathering

after :load, :retrieve_js_data; also :event, :retrieve_event_js_data

}

end

helpers.rb:

# State

def log_error( error )

# ...

end

def log_exception( exception )

# ...

end

# Data

def save_to_db( obj )

# Do stuff...

end

def save_js_data_to_db( data, element, event )

# Do other stuff...

end

# Scope

def within_the_eshop( url )

url.path.start_with? '/eshop'

end

def with_error( page )

/Error/i.match? page.body

end

def in_admin_panel( page )

/Admin panel/i.match? page.body

end

def that_clicks_the_logout_button( event, element )

event == :click && element.tag_name == :button &&

element.attributes['id'] == 'logout'

end

def with_sensitive_action( element )

element.action.include? '/sensitive.php'

end

def with_weird_nonce( element )

element.inputs.include? 'weird_nonce'

end

# HTTP

def generate_request_header

# ...

end

def save_raw_http_response( response )

# ...

end

def save_raw_http_request( request )

# ...

end

def add_special_auth_header( request )

request.headers['Special-Auth-Header'] ||= generate_request_header

end

def increment_http_performer_count( response )

# Count the amount of requests/responses this system component has

# performed/received.

#

# Performers can be browsers, checks, plugins, session, etc.

stuff( response.request.performer.class )

end

def gather_traffic_data( response )

# Collect raw HTTP traffic data.

save_raw_http_response( response.to_s )

save_raw_http_request( response.request.to_s )

end

# Checks

def with_missing_important_header_info

{

name: 'Missing Important-Header',

description: %q{Checks pages for missing `Important-Header` headers.},

elements: [ Element::Server ],

issue: {

name: %q{Missing 'Important-Header' header},

severity: Severity::INFORMATIONAL

}

}

end

# This will run from the context of a Check::Base.

def log_pages_with_missing_important_headers

return if audited?( page.parsed_url.host ) ||

page.response.headers['Important-Header']

audited( page.parsed_url.host )

log(

vector: Element::Server.new( page.url ),

proof: page.response.headers_string

)

end

# Plugins

# This will run from the context of a Plugin::Base.

def send_debugging_info_to_remote_server

address = '192.168.0.11'

port = 81

auth = Utilities.random_seed

url = `start_remote_debug_server.sh -a #{address} -p #{port} --auth #{auth}`

url.strip!

http.post( url,

body: SCNR::Engine::Options.to_h.to_json,

mode: :sync

)

while framework.running? && sleep( 5 )

http.post( "#{url}/statistics",

body: framework.statistics.to_json,

mode: :sync

)

end

end

def send_debugging_info_to_remote_server_info

{

name: 'Debugger'

}

end

# Fingerprinters

# This will run from the context of a Fingerprinter::Base.

def treat_x_as_php

return if extension != 'x'

platforms << :php

end

# Session

def fill_in_and_submit_the_login_form( browser )

browser.load "#{SCNR::Engine::Options.url}/login"

form = browser.form

form.text_field( name: 'username' ).set 'john'

form.text_field( name: 'password' ).set 'doe'

form.input( name: 'submit' ).click

end

def find_welcome_message

http.get( SCNR::Engine::Options.url, mode: :sync ).body.include?( 'Welcome user!' )

end

# Inputs

def with_valid_code( name, current_value )

{

'voucher-code' => voucher_code_generator( current_value ),

'serial-number' => serial_number_generator( current_value )

}[name]

end

def with_valid_role_id( inputs )

return if !inputs.include?( 'role-type' )

inputs['role-id'] ||= (inputs['role-type'] == 'manager' ? 1 : 2)

inputs

end

# Browser

def start_js_data_gathering( page, browser )

return if !page.url.include?( 'something/interesting' )

browser.javascript.inject <<JS

// Gather JS data from listeners etc.

window.secretJSData = {};

JS

end

def retrieve_js_data( page, browser )

return if !page.url.include?( 'something/interesting' )

save_js_data_to_db(

browser.javascript.run( 'return window.secretJSData' ),

page, :load

)

end

def retrieve_event_js_data( event, element, browser )

return if !browser.url.include?( 'something/interesting' )

save_js_data_to_db(

browser.javascript.run( 'return window.secretJSData' ),

element, event

)

end

def handle_results( report, statistics )

puts

puts '=' * 80

puts

puts "[#{report.sitemap.size}] Sitemap:"

puts

report.sitemap.sort_by { |url, _| url }.each do |url, code|

puts "\t[#{code}] #{url}"

end

puts

puts '-' * 80

puts

puts "[#{report.issues.size}] Issues:"

puts

report.issues.each.with_index do |issue, idx|

s = "\t[#{idx+1}] #{issue.name} in `#{issue.vector.type}`"

if issue.vector.respond_to?( :affected_input_name ) &&

issue.vector.affected_input_name

s << " input `#{issue.vector.affected_input_name}`"

end

puts s << '.'

puts "\t\tAt `#{issue.page.dom.url}` from `#{issue.referring_page.dom.url}`."

if issue.proof

puts "\t\tProof:\n\t\t\t#{issue.proof.gsub( "\n", "\n\t\t\t" )}"

end

puts

end

puts

puts '-' * 80

puts

puts "Statistics:"

puts

puts "\t" << statistics.ai.gsub( "\n", "\n\t" )

end

Single file

require 'scnr/engine/api'

# Mute output messages from the CLI interface, we've got our own output methods.

SCNR::UI::CLI::Output.mute

SCNR::Engine::API.run do

State {

on :change do |state|

puts "State\t\t- #{state.status.capitalize}"

end

}

Data {

Issues {

on :new do |issue|

puts "Issue\t\t- #{issue.name} from `#{issue.referring_page.dom.url}`" <<

" in `#{issue.vector.type}`."

end

}

}

Logging {

on :error do |error|

$stderr.puts "Error\t\t- #{error}"

end

# Way too much noise.

# on :exception do |exception|

# ap exception

# ap exception.backtrace

# end

}

Dom {

# Allow some time for the modal animation to complete in order for

# the login form to appear.

#

# (Not actually necessary, this is just an example of how to handle quirks.)

on :event do |_, locator, event, *|

next if locator.attributes['href'] != '#myModal' || event != :click

sleep 1

end

}

Checks {

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

#

# Does something really simple, logs an issue for each 404 page.

as :not_found,

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

} do

response = page.response

next if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

}

Plugins {

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin do

puts "#{shortname}\t- Running..."

wait_while_framework_running

puts "#{shortname}\t- Done!"

end

}

Scan {

Options {

set url: 'http://testhtml5.vulnweb.com',

audit: {

elements: [:links, :forms, :cookies]

},

checks: ['*']

}

Session {

to :login do |browser|

print "Session\t\t- Logging in..."

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

if browser.response.body =~ /<b>admin/

puts 'done!'

else

puts 'failed!'

end

end

to :check do |async|

print "Session\t\t- Checking..."

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, then the framework would rather

# schedule it to run asynchronously.

if async

http_client.get SCNR::Engine::Options.url do |response|

success = check.call( response )

puts "logged #{success ? 'in' : 'out'}!"

async.call success

end

else

response = http_client.get( SCNR::Engine::Options.url, mode: :sync )

success = check.call( response )

puts "logged #{success ? 'in' : 'out'}!"

success

end

end

}

Scope {

# Don't visit resources that will end the session.

reject :url do |url|

url.path.optimized_include?( 'login' ) ||

url.path.optimized_include?( 'logout' )

end

}

before :page do |page|

puts "Processing\t- [#{page.response.code}] #{page.dom.url}"

end

on :page do |page|

puts "Scanning\t- [#{page.response.code}] #{page.dom.url}"

end

after :page do |page|

puts "Scanned\t\t- [#{page.response.code}] #{page.dom.url}"

end

run! do |report, statistics|

puts

puts '=' * 80

puts

puts "[#{report.sitemap.size}] Sitemap:"

puts

report.sitemap.sort_by { |url, _| url }.each do |url, code|

puts "\t[#{code}] #{url}"

end

puts

puts '-' * 80

puts

puts "[#{report.issues.size}] Issues:"

puts

report.issues.each.with_index do |issue, idx|

s = "\t[#{idx+1}] #{issue.name} in `#{issue.vector.type}`"

if issue.vector.respond_to?( :affected_input_name ) &&

issue.vector.affected_input_name

s << " input `#{issue.vector.affected_input_name}`"

end

puts s << '.'

puts "\t\tAt `#{issue.page.dom.url}` from `#{issue.referring_page.dom.url}`."

if issue.proof

puts "\t\tProof:\n\t\t\t#{issue.proof.gsub( "\n", "\n\t\t\t" )}"

end

puts

end

puts

puts '-' * 80

puts

puts "Statistics:"

puts

puts "\t" << statistics.ai.gsub( "\n", "\n\t" )

end

}

end

Supposing the above is saved as html5.scanner.rb:

bin/spectre_script html5.scanner.rb

Distributed

Distribution features are deferred to Cuboid; hence, a quick read through its readme will outline the architecture.

In this case, the Cuboid application is Spectre Scan.

Agent

To start an Agent run the spectre_agent CLI executable.

To see all available options run:

bin/spectre_agent -h

Each Agent should run on a different machine and its main role is to provide Instances to clients; each Instance is a scanner process.

The Agent will also split the available resources of the machine on which it runs into slots, with each slot corresponding to enough space for one Instance.

(To see how many slots a machine has you can use the spectre_system_info utility.)
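As a rough illustration of how a machine's resources could translate into slots, the sketch below divides the free resources by the per-Instance figures from the system requirements table. The actual formula used by the Agent is not documented here, so the `slots` helper, its parameters and the one-core-per-Instance rule are assumptions; the spectre_system_info utility remains the authoritative source.

```ruby
# Per-Instance requirements, taken from the system requirements table.
RAM_PER_INSTANCE_GB  = 2
DISK_PER_INSTANCE_GB = 4

# Number of Instances the machine could host: the scarcest resource wins.
# Allowing one Instance per CPU core is an assumption made for this sketch.
def slots( free_ram_gb:, free_disk_gb:, cpu_cores: )
  [
    free_ram_gb  / RAM_PER_INSTANCE_GB,
    free_disk_gb / DISK_PER_INSTANCE_GB,
    cpu_cores
  ].min
end

puts slots( free_ram_gb: 16, free_disk_gb: 100, cpu_cores: 4 )
```

In this example the CPU is the limiting factor, so adding RAM or disk would not yield more slots.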

Example

Server

In one terminal run:

bin/spectre_agent

The default port at the time of writing is 7331, so you should see something like:

I, [2022-01-23T09:54:21.849679 #1121060] INFO -- System: RPC Server started.

I, [2022-01-23T09:54:21.849730 #1121060] INFO -- System: Listening on 127.0.0.1:7331

Client

To start a scan originating from that Agent you must issue a spawn call in order to obtain an Instance; this can be achieved using the spectre_spawn CLI executable.

In another terminal run:

bin/spectre_spawn --agent-url=127.0.0.1:7331 http://testhtml5.vulnweb.com

The above will run a scan with the default options against http://testhtml5.vulnweb.com, originating from the Agent node.

The spectre_spawn utility largely accepts the same options as spectre.

If the Agent is out of slots you will see the following message:

[~] Agent is at maximum utilization, please try again later.

In which case you can keep retrying until a slot opens up.
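Retrying can be automated with a small loop. The sketch below is plain Ruby and does not call the Agent: `with_retries` is a made-up helper, and the block stands in for whatever spawn call you use, returning nil while the Agent is at maximum utilization.

```ruby
# Calls the block until it returns a truthy value or the attempts run out,
# sleeping between tries. Returns the block's value, or nil on failure.
def with_retries( attempts: 5, delay: 1 )
  attempts.times do |attempt|
    result = yield( attempt + 1 )
    return result if result

    sleep( delay ) if attempt < attempts - 1
  end

  nil
end

# Stand-in for a spawn call: the Agent is busy twice, then hands out an
# Instance URL (made up for the example).
responses = [nil, nil, '127.0.0.1:3390']

instance = with_retries( attempts: 5, delay: 0 ) do |attempt|
  puts "Attempt #{attempt}..."
  responses.shift
end

puts instance ? "Got Instance at #{instance}." : 'Agent still at maximum utilization.'
```

In practice a non-zero delay (possibly increasing between attempts) avoids hammering an Agent that is already fully utilized.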

Grid

A Grid is simply a group of Agents and its setup is as simple as specifying an already running Agent as a peer to a future Agent.

The order in which you start or specify peers is irrelevant; Agents will reach convergence on their own and keep track of their connectivity status with each other.

After a Grid is configured, when a spawn call is issued to any Grid member it will be served by any of its Agents based on the desired distribution strategy and not necessarily by the one receiving it.

Strategies

Horizontal (default)

spawn calls will be served by the least burdened Agent, i.e. the Agent with the least utilization of its slots.

This strategy helps to keep the overall Grid health good by spreading the workload across as many nodes as possible.

Vertical

spawn calls will be served by the most burdened Agent, i.e. the Agent with the most utilization of its slots.

This strategy helps to keep the overall Grid size (and thus cost) low by utilizing as few Grid nodes as possible.

It will also let you know if you have over-provisioned as extra nodes will not be receiving any workload.
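The two strategies can be sketched as a choice between the least and the most utilized Agent that still has a free slot. This is a plain-Ruby illustration of the descriptions above, not the Grid's implementation; the `Agent` struct, its fields and `pick_agent` are hypothetical names.

```ruby
# Minimal stand-in for a Grid member; the fields are assumptions.
Agent = Struct.new( :url, :used_slots, :total_slots ) do
  def utilization
    used_slots.to_f / total_slots
  end

  def available?
    used_slots < total_slots
  end
end

# Picks the Agent that should serve the next spawn call.
def pick_agent( agents, strategy: :horizontal )
  candidates = agents.select( &:available? )

  case strategy
  when :horizontal then candidates.min_by( &:utilization )
  when :vertical   then candidates.max_by( &:utilization )
  else raise ArgumentError, "Unknown strategy: #{strategy}"
  end
end

grid = [
  Agent.new( '127.0.0.1:7331', 3, 4 ),
  Agent.new( '127.0.0.1:7332', 1, 4 ),
  Agent.new( '127.0.0.1:7333', 4, 4 ) # Full, never selected.
]

puts pick_agent( grid, strategy: :horizontal ).url # 127.0.0.1:7332
puts pick_agent( grid, strategy: :vertical ).url   # 127.0.0.1:7331
```

Under the vertical strategy an Agent that never gets picked is a sign of over-provisioning.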

Examples

Server

In one terminal run:

bin/spectre_agent

In another terminal run:

bin/spectre_agent --port=7332 --peer=127.0.0.1:7331

In another terminal run:

bin/spectre_agent --port=7333 --peer=127.0.0.1:7332

(It doesn’t matter who the peer is as long as it’s part of the Grid.)

Now we have a Grid of 3 Agents.

The point of course is to run each Agent on a different machine in real life.

Client

Same as Agent client.

Scheduler

To start a Scheduler run the spectre_scheduler CLI executable.

To see all available options run:

bin/spectre_scheduler -h

The main role of the Scheduler is to:

- Queue scans based on their assigned priority.

- Run them if there is an available slot.

- Monitor their progress.

- Grab and store reports once scans complete.
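The queueing behaviour listed above can be sketched with a toy scheduler in plain Ruby. It is only an illustration under stated assumptions: `ToyScheduler` and its methods are made up, higher numbers are assumed to mean higher priority, and monitoring and report storage are left out.

```ruby
# Toy model of a priority queue with a fixed number of slots.
class ToyScheduler
  attr_reader :running

  def initialize( slots: )
    @slots   = slots
    @queue   = []
    @running = []
    @counter = 0
  end

  # Queues a scan; higher priority runs first, ties run in push order.
  def push( url, priority: 0 )
    @queue << [-priority, @counter += 1, url]
    @queue.sort!
    run_available
  end

  # Marks a scan as finished, freeing its slot for the next queued scan.
  def complete( url )
    @running.delete( url )
    run_available
  end

  def queued
    @queue.map( &:last )
  end

  private

  # Starts queued scans for as long as there are free slots.
  def run_available
    @running << @queue.shift.last while @running.size < @slots && @queue.any?
  end
end

scheduler = ToyScheduler.new( slots: 1 )
scheduler.push 'http://a.example', priority: 1 # Runs immediately.
scheduler.push 'http://b.example', priority: 1 # Queued.
scheduler.push 'http://c.example', priority: 5 # Queued ahead of b.
scheduler.complete 'http://a.example'

puts "Running: #{scheduler.running.join( ', ' )}"
puts "Queued:  #{scheduler.queued.join( ', ' )}"
```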

Default

By default, scans will run on the same machine as the Scheduler.

With Agent

When an Agent has been provided, spawn calls are going to be issued in order to acquire Instances to run the scans.

Grid

In the case where the given Agent is a Grid member, scans will be load-balanced across the Grid according to the configured strategy.

Examples

Server

In one terminal run:

bin/spectre_scheduler

Client

Pushing

In another terminal run:

bin/spectre_scheduler_push --scheduler-url=localhost:7331 http://testhtml5.vulnweb.com

Then you should see something like:

[~] Pushed scan with ID: 5fed6c50f3699bacb841cc468cc97094

Monitoring

To see what the Scheduler is doing run:

bin/spectre_scheduler_list localhost:7331

Then you should see something like:

[~] Queued [0]

[*] Running [1]

[1] 5fed6c50f3699bacb841cc468cc97094: 127.0.0.1:3390/070116f5e2c0acaa0a6432acdcc7230a

[+] Completed [0]

[-] Failed [0]

If you run the same command after a while and the scan has completed:

[~] Queued [0]

[*] Running [0]

[+] Completed [1]

[1] 5fed6c50f3699bacb841cc468cc97094: /home/username/.cuboid/reports/5fed6c50f3699bacb841cc468cc97094.crf

[-] Failed [0]

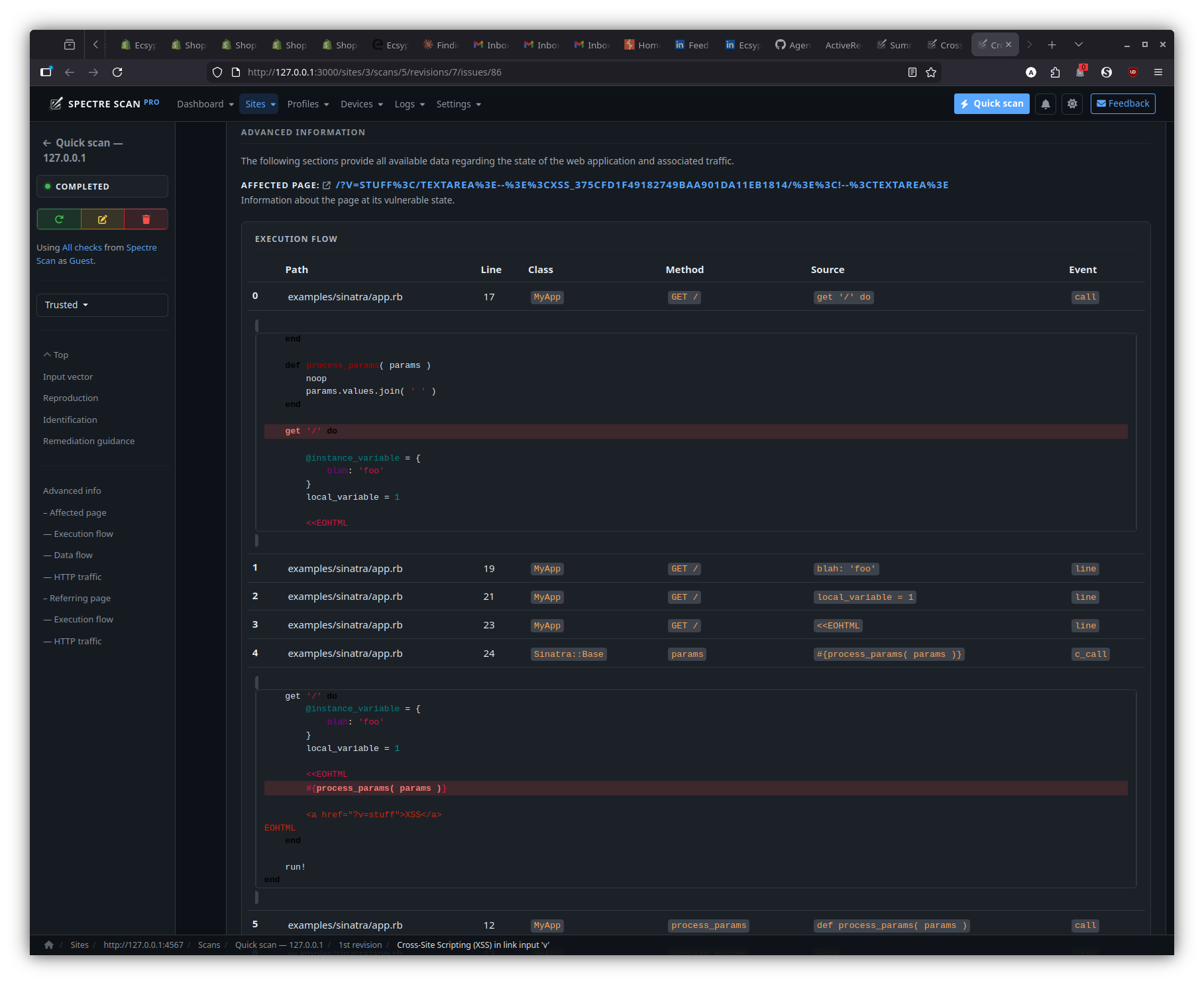

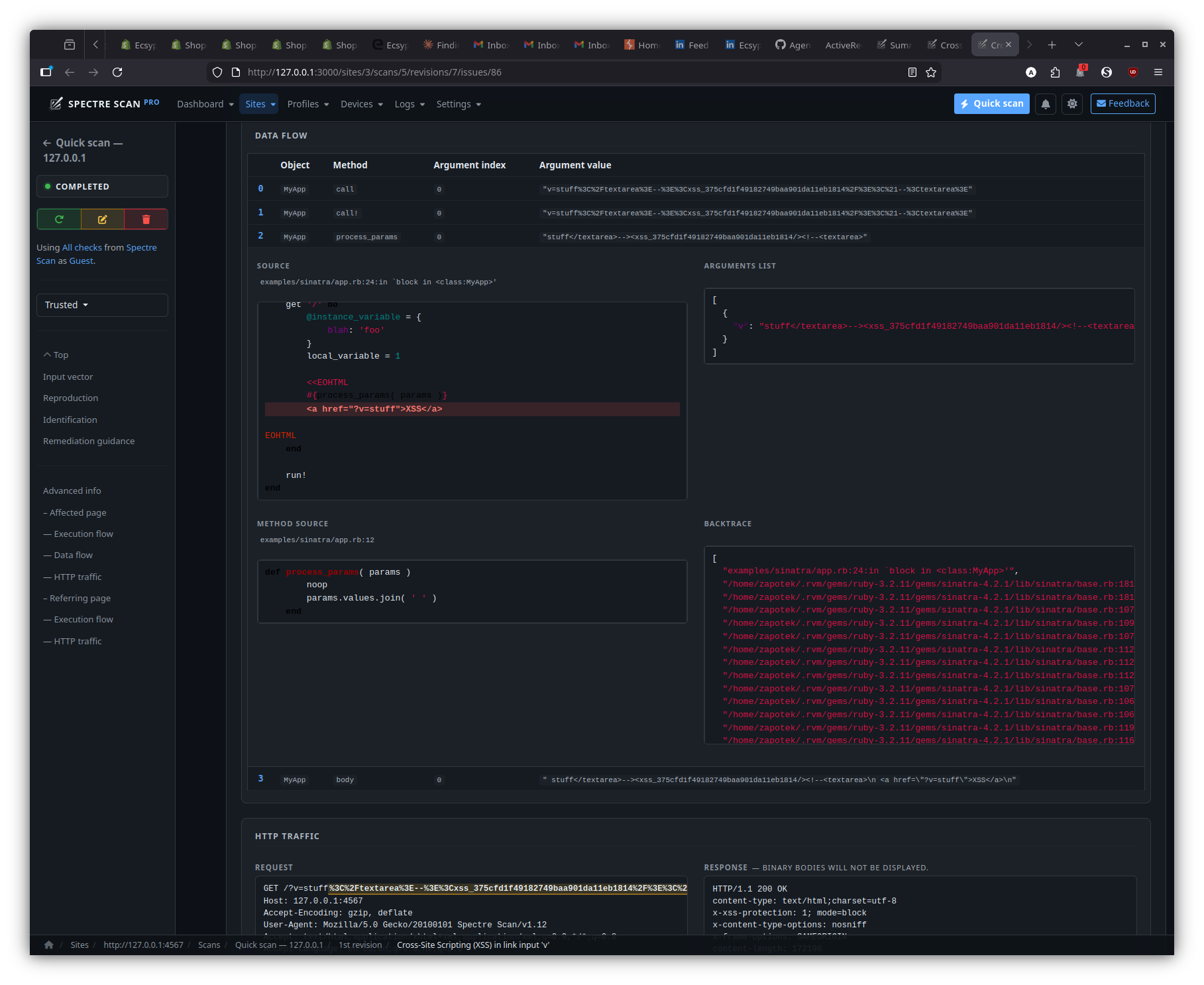

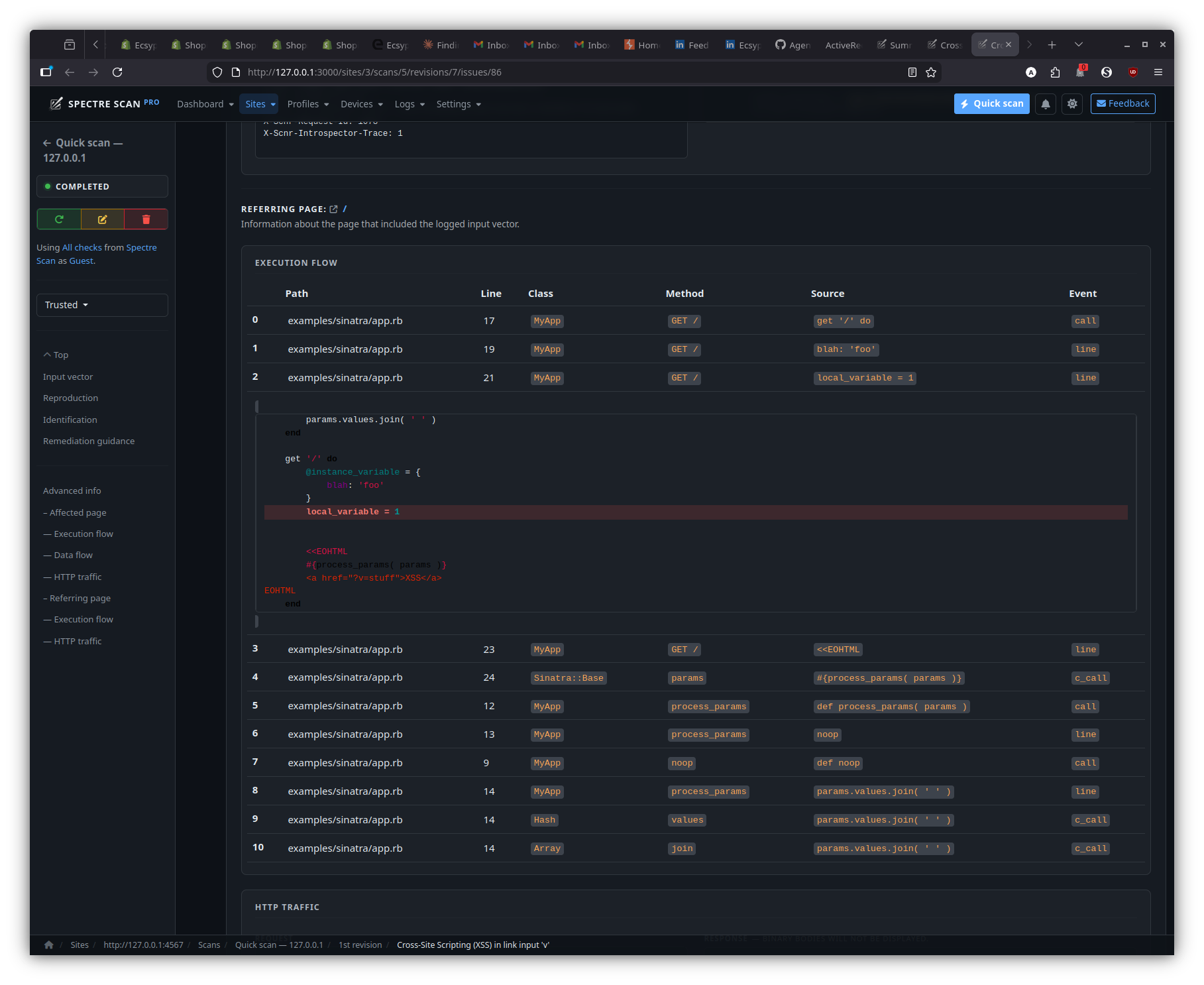

Introspector

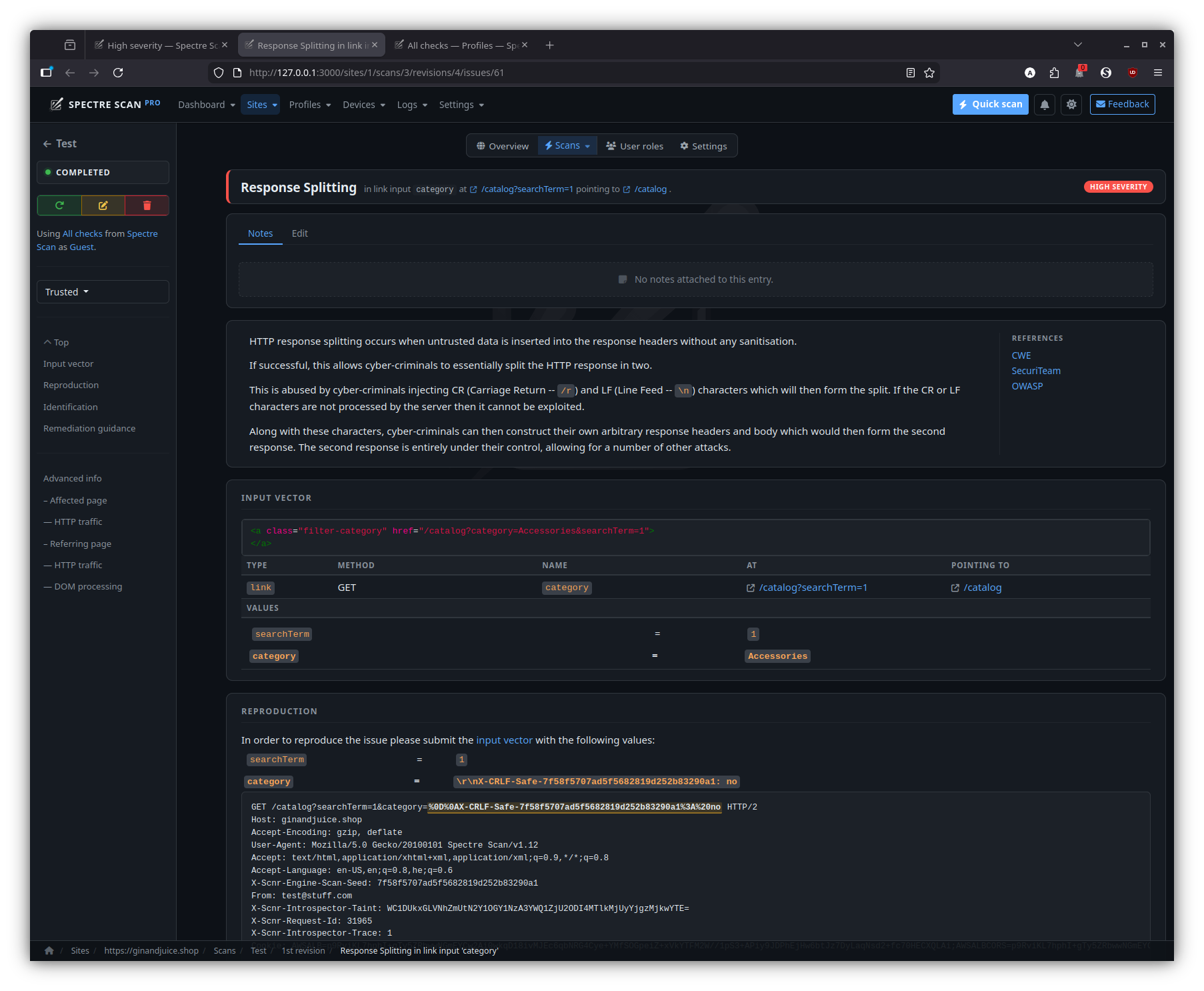

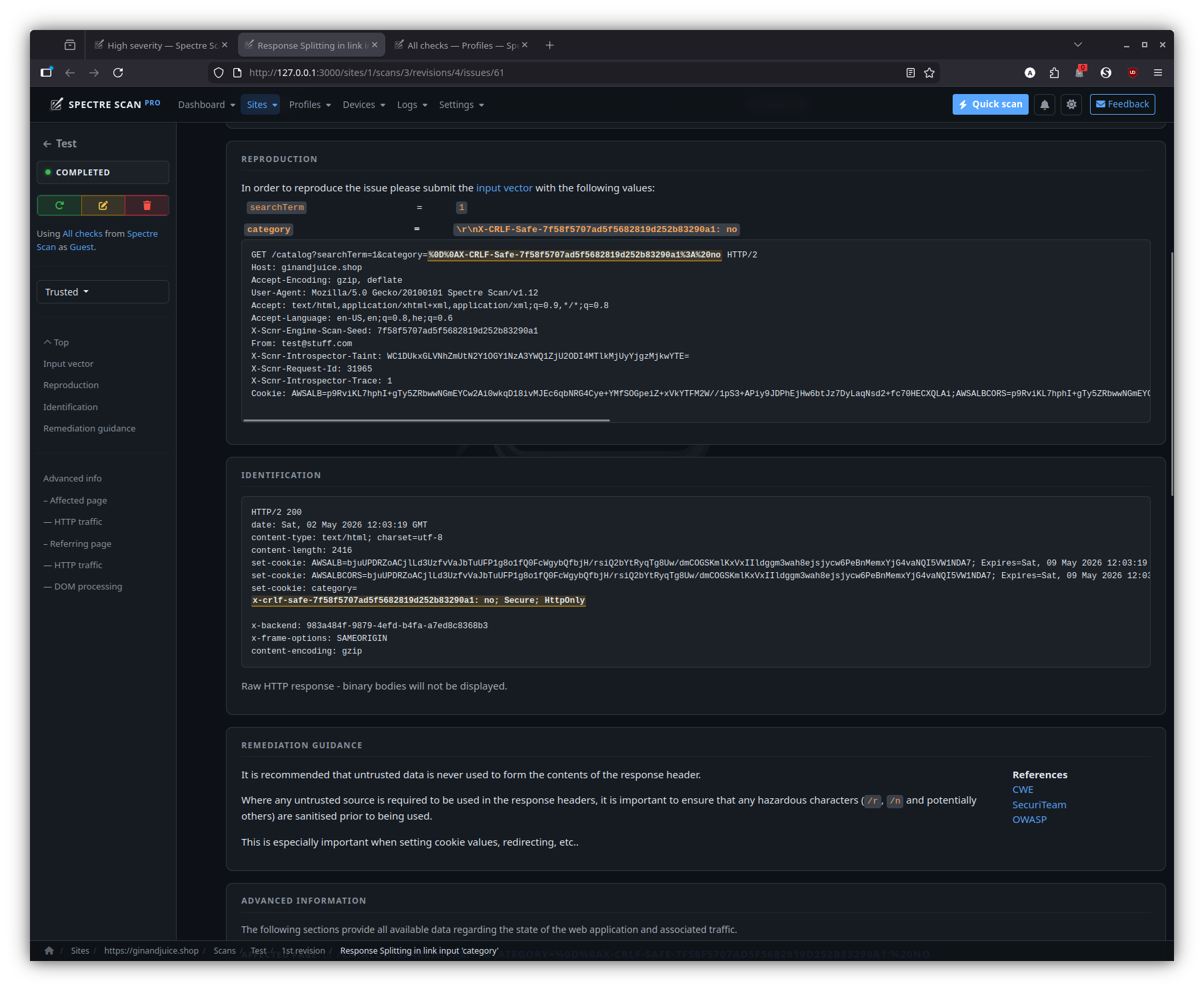

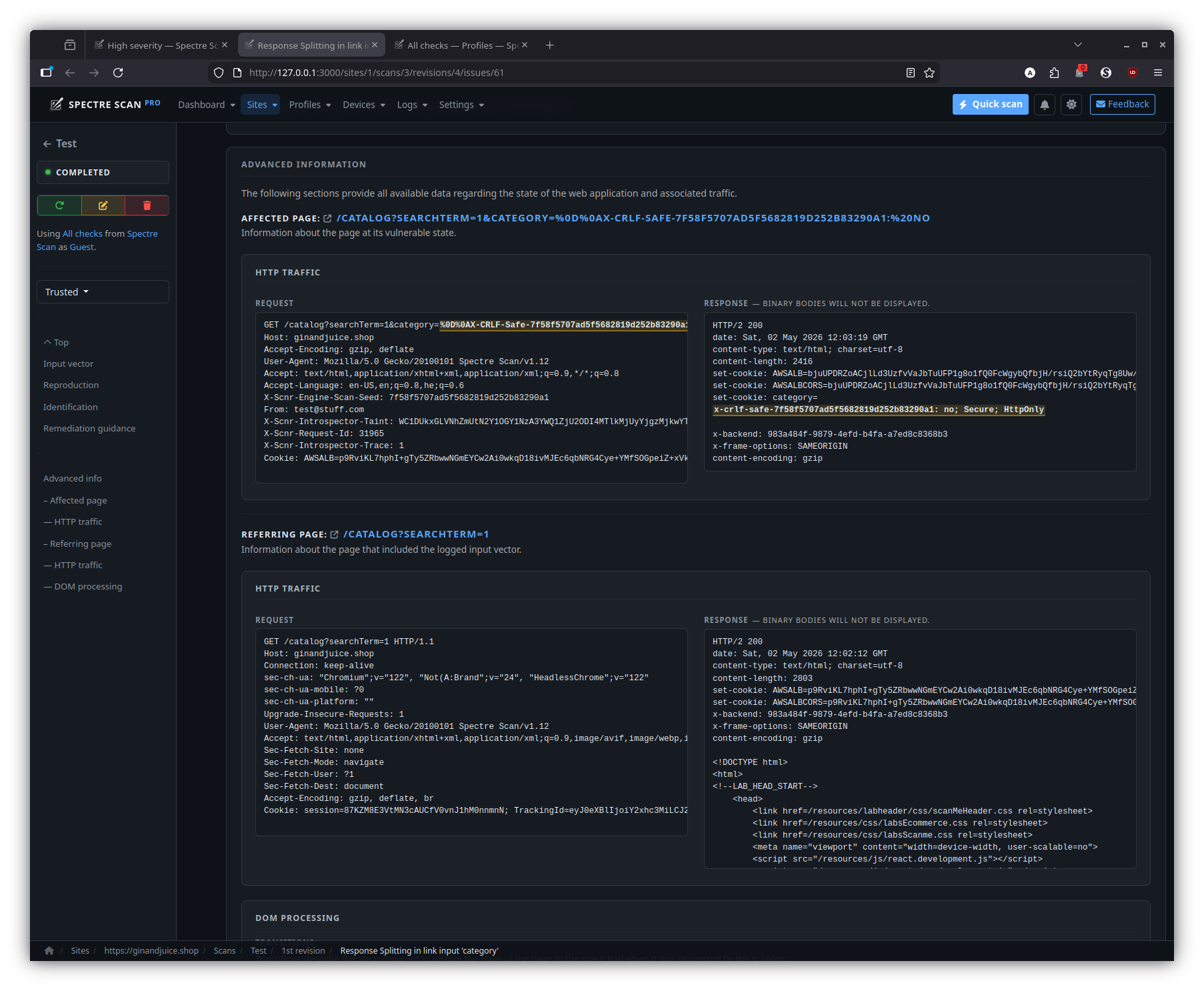

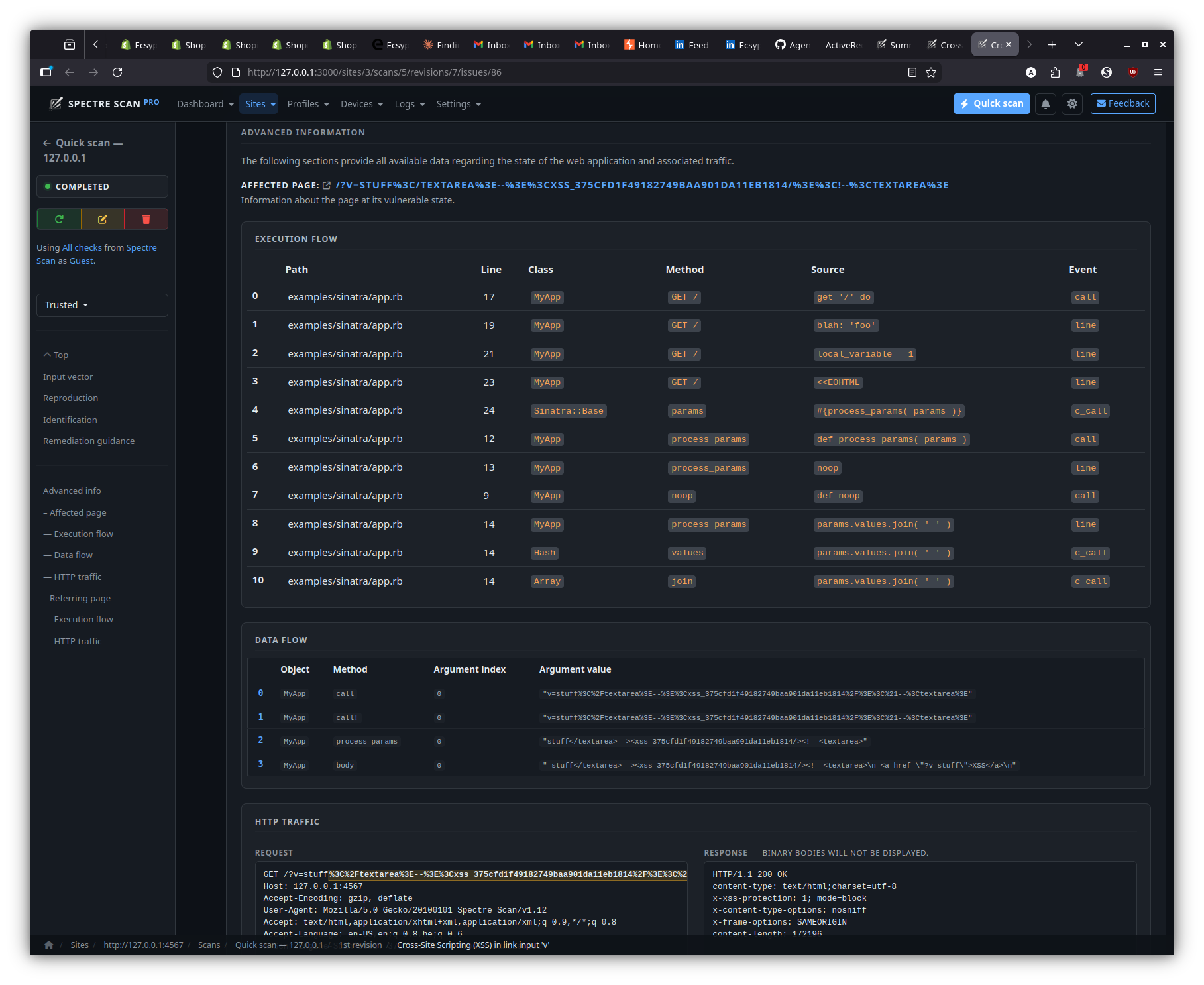

The Spectre Scan Introspector is basically middleware for your web application.

When this middleware is used, advanced execution and data flow information about the web application is gathered, allowing for easier identification and remediation of each identified issue.

Ruby

Install

gem install scnr-introspector

Use middleware

Options

| Option | Description | Default | Example |
|---|---|---|---|
| path_start_with | Only instrument classes whose path starts with this prefix | none | example/ |
| path_ends_with | Only instrument classes whose path ends with this suffix | none | app.rb |
| path_include_patterns | Only instrument classes whose path matches all regex patterns | none | .*service.* |
| path_exclude_patterns | Exclude classes whose path matches any of the regex patterns | none | .*test.* |
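How these options combine can be sketched as a single predicate. This is a hypothetical re-implementation of the semantics described in the table, not the Introspector's own code; `instrument?` is a made-up name and the assumption is that all given options must be satisfied together.

```ruby
# Decides whether a file path falls within the configured scope.
# Options that are not given impose no restriction.
def instrument?( path, scope = {} )
  return false if scope[:path_start_with] && !path.start_with?( scope[:path_start_with] )
  return false if scope[:path_ends_with] && !path.end_with?( scope[:path_ends_with] )

  includes = Array( scope[:path_include_patterns] ).map { |p| Regexp.new( p ) }
  excludes = Array( scope[:path_exclude_patterns] ).map { |p| Regexp.new( p ) }

  # Every include pattern must match and no exclude pattern may match.
  includes.all? { |re| re.match?( path ) } &&
    excludes.none? { |re| re.match?( path ) }
end

scope = {
  path_start_with:       'example/',
  path_exclude_patterns: ['.*test.*']
}

puts instrument?( 'example/app.rb', scope )      # true
puts instrument?( 'example/app_test.rb', scope ) # false
puts instrument?( 'lib/app.rb', scope )          # false
```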

app.rb:

require 'scnr/introspector' # Include!

require 'sinatra/base'

class MyApp < Sinatra::Base

# Use!

use SCNR::Introspector, scope: {

path_start_with: __FILE__

}

def noop

end

def process_params( params )

noop

params.values.join( ' ' )

end

get '/' do

@instance_variable = {

blah: 'foo'

}

local_variable = 1

<<EOHTML

#{process_params( params )}

<a href="?v=stuff">XSS</a>

EOHTML

end

run!

end

Verify

Run the Web App:

bundle exec ruby examples/sinatra/app.rb

You should see this at the beginning:

[INTROSPECTOR] Spectre Scan Introspector Initialized.

Along with these types of messages:

[INTROSPECTOR] Injecting trace code for MyApp#process_params in examples/sinatra/app.rb:12

As an integration test, you can run:

curl -i http://localhost:4567/ -H "X-Scnr-Engine-Scan-Seed:Test" -H "X-Scnr-Introspector-Trace:1" -H "X-SCNR-Request-ID:1"
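The same request can be built from Ruby with the standard library's Net::HTTP. The header names and values are those of the curl command above; `TRACE_HEADERS`, `trace_request` and `fetch_with_trace` are names made up for this sketch, and the request is only sent when `fetch_with_trace` is called against a running application.

```ruby
require 'net/http'
require 'uri'

# Headers taken from the curl command above.
TRACE_HEADERS = {
  'X-Scnr-Engine-Scan-Seed'   => 'Test',
  'X-Scnr-Introspector-Trace' => '1',
  'X-SCNR-Request-ID'         => '1'
}.freeze

# Builds (but does not send) a GET request carrying the trace headers.
def trace_request( url )
  request = Net::HTTP::Get.new( URI( url ) )
  TRACE_HEADERS.each { |name, value| request[name] = value }
  request
end

# Sends the request and returns the response; requires the web application
# to be running at the given URL.
def fetch_with_trace( url )
  uri = URI( url )
  Net::HTTP.start( uri.host, uri.port ) do |http|
    http.request( trace_request( url ) )
  end
end

request = trace_request( 'http://localhost:4567/' )
TRACE_HEADERS.each_key { |name| puts "#{name}: #{request[name]}" }
```

Calling `fetch_with_trace( 'http://localhost:4567/' ).body` while the application is running should return the page along with the trace comment.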

You should see something like this (the comments are the important part):

HTTP/1.1 200 OK

Content-Type: text/html;charset=utf-8

X-XSS-Protection: 1; mode=block

X-Content-Type-Options: nosniff

X-Frame-Options: SAMEORIGIN

Content-Length: 7055

<a href="?v=stuff">XSS</a>

<!-- Test

{"execution_flow":{"points":[{"path":"examples/sinatra/app.rb","line_number":17,"class_name":"MyApp","method_name":"GET /","event":"call","source":" get '/' do\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":19,"class_name":"MyApp","method_name":"GET /","event":"line","source":" blah: 'foo'\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":21,"class_name":"MyApp","method_name":"GET /","event":"line","source":" local_variable = 1\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":23,"class_name":"MyApp","method_name":"GET /","event":"line","source":" <<EOHTML\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < 
Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":12,"class_name":"MyApp","method_name":"process_params","event":"call","source":" def process_params( params )\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":13,"class_name":"MyApp","method_name":"process_params","event":"line","source":" noop\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":9,"class_name":"MyApp","method_name":"noop","event":"call","source":" def noop\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n 
local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":14,"class_name":"MyApp","method_name":"process_params","event":"line","source":" params.values.join( ' ' )\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":14,"class_name":"Hash","method_name":"values","event":"c_call","source":" params.values.join( ' ' )\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"},{"path":"examples/sinatra/app.rb","line_number":14,"class_name":"Array","method_name":"join","event":"c_call","source":" params.values.join( ' ' )\n","file_contents":"require 'scnr/introspector'\nrequire 'sinatra/base'\n\nclass MyApp < Sinatra::Base\n use SCNR::Introspector, scope: {\n path_start_with: __FILE__\n }\n\n def noop\n end\n\n def process_params( params )\n noop\n params.values.join( ' ' )\n end\n\n get '/' do\n @instance_variable = {\n blah: 'foo'\n }\n local_variable = 1\n\n <<EOHTML\n #{process_params( params )}\n <a href=\"?v=stuff\">XSS</a>\nEOHTML\n end\n\n run!\nend\n"}]},"platforms":["ruby","linux"]}

-->

Java

Options

| Option | Description | Default | Example |
|---|---|---|---|
| path_start_with | Only instrument classes whose path starts with this prefix | none | com/example |
| path_ends_with | Only instrument classes whose path ends with this suffix | none | Controller |
| path_include_pattern | Only instrument classes matching this regex pattern | none | .*Service.* |
| path_exclude_pattern | Exclude classes matching this regex pattern | none | .*Test.* |
| source_directory | Root directory containing source files | src/main/java/ | /path/to/src |
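To make the interplay of the path options easier to picture, here is a minimal Ruby sketch of a filter built from them. It is not the agent's implementation: the `instrument?` helper is hypothetical, and it assumes that every option that is set must match and that the exclude pattern always wins.

```ruby
# Hypothetical helper, not part of the Introspector agent.
# Assumption: all configured filters must match; the exclude pattern wins.
def instrument?( path, opts = {} )
  prefix          = opts[:path_start_with]
  suffix          = opts[:path_ends_with]
  include_pattern = opts[:path_include_pattern]
  exclude_pattern = opts[:path_exclude_pattern]

  return false if prefix && !path.start_with?( prefix )
  return false if suffix && !path.end_with?( suffix )
  return false if include_pattern && !path.match?( Regexp.new( include_pattern ) )
  return false if exclude_pattern && path.match?( Regexp.new( exclude_pattern ) )

  true
end

opts = { path_start_with: 'com/example', path_exclude_pattern: '.*Test.*' }

puts instrument?( 'com/example/XssServlet', opts )     # true
puts instrument?( 'com/example/XssServletTest', opts ) # false, excluded
puts instrument?( 'org/other/Servlet', opts )          # false, wrong prefix
```

Under these assumptions, narrowing the prefix is the cheapest way to keep instrumentation limited to your own code.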

Download

Download the latest JAR archive.

Install Middleware

webapp/WEB-INF/web.xml:

<?xml version="1.0" encoding="UTF-8"?>

<web-app xmlns="http://xmlns.jcp.org/xml/ns/javaee"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://xmlns.jcp.org/xml/ns/javaee

http://xmlns.jcp.org/xml/ns/javaee/web-app_4_0.xsd"

version="4.0">

<filter>

<filter-name>introspectorFilter</filter-name>

<filter-class>com.ecsypno.introspector.middleware.IntrospectorFilter</filter-class>

</filter>

<filter-mapping>

<filter-name>introspectorFilter</filter-name>

<url-pattern>/*</url-pattern>

</filter-mapping>

</web-app>

Load Agent along with your webapp

MAVEN_OPTS="-javaagent:introspector.jar=path_start_with=com/example" mvn clean package tomcat7:run

Verify

You should see this at the beginning:

[INTROSPECTOR] Initializing Spectre Scan Introspector agent...

[INTROSPECTOR] Setting up instrumentation.

And messages like this after initialization for each traced line:

[INTROSPECTOR] Injecting trace code for com/example/XssServlet.<init> line 11 in src/main/java//com/example/XssServlet.java

Finally, run an integration test to make sure that tracing works end to end:

curl -i http://localhost:8080/ -H "X-Scnr-Engine-Scan-Seed:Test" -H "X-Scnr-Introspector-Trace:1" -H "X-SCNR-Request-ID:1"

At the end of the HTTP response you should see something like:

<!-- Test

{

"platforms": ["java"],

"execution_flow": {

"points": [

{

"method_name": "doGet",

"class_name": "com/example/SampleWebApp",

"path": "src/main/java//com/example/SampleWebApp.java",

"line_number": 16,

"source": " resp.setContentType(\"text/html\");",

"file_contents": "package com.example;\n\nimport java.io.IOException;\nimport javax.servlet.ServletException;\nimport javax.servlet.annotation.WebServlet;\nimport javax.servlet.http.HttpServlet;\nimport javax.servlet.http.HttpServletRequest;\nimport javax.servlet.http.HttpServletResponse;\n\n@WebServlet(\"/\")\npublic class SampleWebApp extends HttpServlet {\n @Override\n protected void doGet(HttpServletRequest req, HttpServletResponse resp) \n throws ServletException, IOException {\n\n resp.setContentType(\"text/html\");\n\n resp.getWriter().println(\"<html><body>\");\n resp.getWriter().println(\"<ul>\");\n resp.getWriter().println(\"<li><a href='/xss'>XSS</a></li>\");\n resp.getWriter().println(\"<li><a href='/cmd'>OS Command Injection</a></li>\");\n resp.getWriter().println(\"</ul>\");\n resp.getWriter().println(\"</body></html>\");\n }\n}"

},

[...]

]

}

}

Test -->
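If you want to check the trace programmatically rather than by eye, the trailing comment can be pulled out of the response body and parsed as JSON. The sketch below is illustrative only: `extract_trace` is a hypothetical helper, the response body is shortened sample data, and it assumes the comment is delimited by the seed value on both sides, as in the output above.

```ruby
require 'json'

# Hypothetical helper: returns the parsed trace, or nil if no trace comment is found.
# Assumption: the comment looks like `<!-- SEED { ...json... } SEED -->`.
def extract_trace( body, seed )
  marker = Regexp.escape( seed )
  match  = body.match( /<!-- #{marker}\s*(\{.*\})\s*#{marker} -->/m )
  match && JSON.parse( match[1] )
end

# Shortened sample body, modelled on the response shown above.
body = <<~HTML
  <html><body>Hello</body></html>
  <!-- Test
  {
    "platforms": ["java"],
    "execution_flow": {
      "points": [
        { "method_name": "doGet", "class_name": "com/example/SampleWebApp", "line_number": 16 }
      ]
    }
  }
  Test -->
HTML

trace = extract_trace( body, 'Test' )
trace['execution_flow']['points'].each do |point|
  puts "#{point['class_name']}.#{point['method_name']} (line #{point['line_number']})"
end
```

A `nil` result would suggest that the trace headers were not sent or that the filter is not installed.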

.NET

Installation

Install Middleware

Add to your project:

dotnet add package Introspector.Web

Install patcher

dotnet tool install --global Introspector.CLI

introspector

Use in a Web Application

Ecsypno.TestApp.csproj:

<ItemGroup>

<PackageReference Include="Introspector.Web"/>

</ItemGroup>

Ecsypno.TestApp.cs:

using Introspector.Web.Extensions;

using System.Web;

var builder = WebApplication.CreateBuilder(args);

var app = builder.Build();

// Add Introspector middleware

app.UseIntrospector();

string ProcessQuery(string input)

{

return input;

}

app.MapGet("/", () => "Hello, world!");

app.MapGet("/xss", (HttpContext context) =>

{

var query = ProcessQuery(context.Request.Query["input"]);

var response = $@"

<html>

<body>

<h1>XSS Example</h1>

<form method='get' action='/xss'>

<label for='input'>Input:</label>

<input type='text' id='input' name='input' value='{query}' />

<button type='submit'>Submit</button>

</form>

<p>{query}</p>

</body>

</html>";

context.Response.ContentType = "text/html";

return response;

});

app.Run();

dotnet run --project Ecsypno.TestApp -c Release

The output should begin with: [INTROSPECTOR] Spectre Scan Introspector middleware initialized.

Patch

dotnet build Ecsypno.TestApp -c Release # Build first.

introspector Ecsypno.TestApp/bin/Release/ --path-ends-with Ecsypno.TestApp.dll --path-exclude-pattern "ref|obj"

# Processing: Ecsypno.TestApp/bin/Release/net8.0/Ecsypno.TestApp.dll

# Instrumenting Program.<Main>$( args )

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:4

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:6

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:9

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:17

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:20

# Instrumenting Program.<Main>$ at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:39

# Instrumenting Program.<<Main>$>g__ProcessQuery|0_0( input )

# Instrumenting Program.<<Main>$>g__ProcessQuery|0_0 at /home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs:14

Verify

Run the Web App again:

dotnet run --project Ecsypno.TestApp -c Release --no-build

This should output:

[INTROSPECTOR] Patched assembly loaded: Ecsypno.TestApp/bin/Release/net8.0/Ecsypno.TestApp.dll

[INTROSPECTOR] Spectre Scan Introspector middleware initialized.

curl -i http://localhost:5055/xss?input=test -H "X-Scnr-Engine-Scan-Seed:Test" -H "X-Scnr-Introspector-Trace:1" -H "X-SCNR-Request-ID:1"

You should see something like this (the trailing HTML comment is the important part):

HTTP/1.1 200 OK

Content-Length: 2135

Content-Type: text/html

Date: Sat, 11 Jan 2025 10:22:46 GMT

Server: Kestrel

<html>

<body>

<h1>XSS Example</h1>

<form method='get' action='/xss'>

<label for='input'>Input:</label>

<input type='text' id='input' name='input' value='test' />

<button type='submit'>Submit</button>

</form>

<p>test</p>

</body>

</html>

<!-- Test

{

"data_flow": [],

"execution_flow": {

"points": [

{

"class_name": "Program",

"method_name": "\u003C\u003CMain\u003E$\u003Eg__ProcessQuery|0_0",

"path": "/home/zapotek/workspace/scnr/dotnet-instrumentation-example/Ecsypno.TestApp/Program.cs",

"line_number": 14,

"source": " return input;",

"file_contents": "using Introspector.Web.Extensions;\nusing System.Web;\n\nvar builder = WebApplication.CreateBuilder(args);\n\nvar app = builder.Build();\n\n// Add Introspector middleware\napp.UseIntrospector();\n\nstring ProcessQuery(string input)\n{\n\n return input;\n}\n\napp.MapGet(\u0022/\u0022, () =\u003E \u0022Hello, world!\u0022);\n\n// Add an XSS example route with a form\napp.MapGet(\u0022/xss\u0022, (HttpContext context) =\u003E\n{\n var query = ProcessQuery(context.Request.Query[\u0022input\u0022]);\n var response = $@\u0022\n \u003Chtml\u003E\n \u003Cbody\u003E\n \u003Ch1\u003EXSS Example\u003C/h1\u003E\n \u003Cform method=\u0027get\u0027 action=\u0027/xss\u0027\u003E\n \u003Clabel for=\u0027input\u0027\u003EInput:\u003C/label\u003E\n \u003Cinput type=\u0027text\u0027 id=\u0027input\u0027 name=\u0027input\u0027 value=\u0027{query}\u0027 /\u003E\n \u003Cbutton type=\u0027submit\u0027\u003ESubmit\u003C/button\u003E\n \u003C/form\u003E\n \u003Cp\u003E{query}\u003C/p\u003E\n \u003C/body\u003E\n \u003C/html\u003E\u0022;\n context.Response.ContentType = \u0022text/html\u0022;\n return response;\n});\n\napp.Run();"

}

]

},

"platforms": [

"aspx"

]

}

Test -->
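The `\u003C` and `\u003E` sequences in the trace are ordinary JSON escapes for `<` and `>`, so any JSON parser restores the compiler-generated method name reported by the patcher. A small Ruby sketch, using only the standard `json` library and the method name from the output above:

```ruby
require 'json'

# The method name exactly as it appears in the raw response (single quotes
# keep the backslashes literal).
raw = '"\u003C\u003CMain\u003E$\u003Eg__ProcessQuery|0_0"'

# Wrapped in an array so that the document parses on older JSON versions too.
decoded = JSON.parse( "[#{raw}]" ).first

puts decoded
# <<Main>$>g__ProcessQuery|0_0
```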

CLI

Command-line interface executables can be found under the bin/ directory and, at the time of writing, are:

Basic, Pro, Enterprise

- spectre – Direct scanning utility.
- spectre_reporter – Generates reports from .crf (Cuboid report file) and .ser (Spectre Scan report) report files.
- spectre_reproduce – Reproduces one or more issues from a given report.
- spectre_restore – Restores a suspended scan based on a snapshot file.
- spectre_script – Runs a Ruby script under the context of SCNR::Engine.

Pro

- spectre_pro – Starts a Web interface server.

REST, SDLC, Enterprise

- spectre_rest_server – Starts a REST server.

MCP, SDLC, Enterprise

- spectre_mcp_server – Starts an MCP server.

Enterprise-only

- spectre_spawn – Issues spawn calls to Agents to start scans remotely.
- spectre_agent – Starts an Agent.
- spectre_scheduler – Starts a Scheduler.

Clients - no edition checks

- spectre_agent_monitor – Monitors an Agent.
- spectre_agent_unplug – Unplugs an Agent from its Grid.
- spectre_instance_connect – Utility to connect to an Instance.
- spectre_scheduler_attach – Attaches a detached Instance to the given Scheduler.
- spectre_scheduler_clear – Clears the Scheduler queue.
- spectre_scheduler_detach – Detaches an Instance from the Scheduler.
- spectre_scheduler_get – Retrieves information for a scheduled scan.
- spectre_scheduler_list – Lists information about all scans under the Scheduler’s control.
- spectre_scheduler_push – Schedules a scan.
- spectre_scheduler_remove – Removes a scheduled scan from the queue.

License utilities

- spectre_activate
- spectre_edition
- spectre_available_seats
- spectre_license info

Other

- spectre_system_info – Presents system information about the host.

Ruby API

The Ruby API utilizes the DSeL DSL/API generator and runner and allows you to:

- Configure scans.

- Add custom components on the fly.

- Create custom scanners.

The API is separated into the following segments:

# Runs the DSL.

SCNR::Engine::API.run do

Data {

Sitemap {}

Urls {}

Pages {}

Issues {}

}

State { }

Browserpool { }

Dom { }

Http { }

Input { }

Logging { }

Checks { }

Plugins { }

Fingerprinters { }

Scan {

Options { }

Scope { }

Session { }

}

end

Examples

As configuration

SCNR::Engine::API.run do

Dom {

# Allow some time for the modal animation to complete in order for

# the login form to appear.

#

# (Not actually necessary, this is just an example of how to handle quirks.)

on :event do |_, locator, event, *|

next if locator.attributes['href'] != '#myModal' || event != :click

sleep 1

end

}

Checks {

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

#

# Does something really simple, logs an issue for each 404 page.

as :not_found,

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

} do

response = page.response

next if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

}

Plugins {

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin do

# Do stuff then wait until scan completes.

wait_while_framework_running

# Do stuff after scan completes.

end

}

Scan {

Session {

to :login do |browser|

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

end

to :check do |async|

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, then the framework would rather

# schedule it to run asynchronously.

if async

http_client.get SCNR::Engine::Options.url do |response|

success = check.call( response )

async.call success

end

else

response = http_client.get( SCNR::Engine::Options.url, mode: :sync )

check.call( response )

end

end

}

Scope {

# Don't visit resources that will end the session.

reject :url do |url|

url.path.optimized_include?( 'login' ) ||

url.path.optimized_include?( 'logout' )

end

}

}

end

Supposing the above is saved as html5.config.rb:

bin/spectre http://testhtml5.vulnweb.com --script=html5.config.rb
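The Scope rule in the script above is just a predicate over the URL path. The following standalone sketch shows the same idea outside the engine, using the standard `URI` class and plain `include?` in place of the engine's `optimized_include?`; the `ends_session` name and the sample URLs are made up for illustration.

```ruby
require 'uri'

# Hypothetical stand-in for the block passed to `reject :url`.
# Only the path is inspected, so query strings do not trigger the rule.
ends_session = proc do |url|
  path = URI( url ).path
  path.include?( 'login' ) || path.include?( 'logout' )
end

urls = %w(
  http://testhtml5.vulnweb.com/
  http://testhtml5.vulnweb.com/logout
  http://testhtml5.vulnweb.com/login
  http://testhtml5.vulnweb.com/?next=login
)

kept = urls.reject( &ends_session )
puts kept
```

Matching on the path alone keeps the rule narrow; a stricter or looser rule is a matter of changing the predicate.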

Standalone

This basically creates a custom scanner.

require 'scnr/engine/api'

# Mute output messages from the CLI interface, we've got our own output methods.

SCNR::UI::CLI::Output.mute

SCNR::Engine::API.run do

State {

on :change do |state|

puts "State\t\t- #{state.status.capitalize}"

end

}

Data {

Issues {

on :new do |issue|

puts "Issue\t\t- #{issue.name} from `#{issue.referring_page.dom.url}`" <<

" in `#{issue.vector.type}`."

end

}

}

Logging {

on :error do |error|

$stderr.puts "Error\t\t- #{error}"

end

# Way too much noise.

# on :exception do |exception|

# ap exception

# ap exception.backtrace

# end

}

Dom {

# Allow some time for the modal animation to complete in order for

# the login form to appear.

#

# (Not actually necessary, this is just an example of how to handle quirks.)

on :event do |_, locator, event, *|

next if locator.attributes['href'] != '#myModal' || event != :click

sleep 1

end

}

Checks {

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

#

# Does something really simple, logs an issue for each 404 page.

as :not_found,

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

} do

response = page.response

next if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

}

Plugins {

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin do

puts "#{shortname}\t- Running..."

wait_while_framework_running

puts "#{shortname}\t- Done!"

end

}

Scan {

Options {

set url: 'http://testhtml5.vulnweb.com',

audit: {

elements: [:links, :forms, :cookies]

},

checks: ['*']

}

Session {

to :login do |browser|

print "Session\t\t- Logging in..."

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

if browser.response.body =~ /<b>admin/

puts 'done!'

else

puts 'failed!'

end

end

to :check do |async|

print "Session\t\t- Checking..."

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, then the framework would rather

# schedule it to run asynchronously.

if async

http_client.get SCNR::Engine::Options.url do |response|

success = check.call( response )

puts "logged #{success ? 'in' : 'out'}!"

async.call success

end

else

response = http_client.get( SCNR::Engine::Options.url, mode: :sync )

success = check.call( response )

puts "logged #{success ? 'in' : 'out'}!"

success

end

end

}

Scope {

# Don't visit resources that will end the session.

reject :url do |url|

url.path.optimized_include?( 'login' ) ||

url.path.optimized_include?( 'logout' )

end

}

before :page do |page|

puts "Processing\t- [#{page.response.code}] #{page.dom.url}"

end

on :page do |page|

puts "Scanning\t- [#{page.response.code}] #{page.dom.url}"

end

after :page do |page|

puts "Scanned\t\t- [#{page.response.code}] #{page.dom.url}"

end

run! do |report, statistics|

puts

puts '=' * 80

puts

puts "[#{report.sitemap.size}] Sitemap:"

puts

report.sitemap.sort_by { |url, _| url }.each do |url, code|

puts "\t[#{code}] #{url}"

end

puts

puts '-' * 80

puts

puts "[#{report.issues.size}] Issues:"

puts

report.issues.each.with_index do |issue, idx|

s = "\t[#{idx+1}] #{issue.name} in `#{issue.vector.type}`"

if issue.vector.respond_to?( :affected_input_name ) &&

issue.vector.affected_input_name

s << " input `#{issue.vector.affected_input_name}`"

end

puts s << '.'

puts "\t\tAt `#{issue.page.dom.url}` from `#{issue.referring_page.dom.url}`."

if issue.proof

puts "\t\tProof:\n\t\t\t#{issue.proof.gsub( "\n", "\n\t\t\t" )}"

end

puts

end

puts

puts '-' * 80

puts

puts "Statistics:"

puts

puts "\t" << statistics.ai.gsub( "\n", "\n\t" )

end

}

end

Supposing the above is saved as html5.scanner.rb:

bin/spectre_script html5.scanner.rb
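The session check in the scanner above supports two calling conventions: return the result directly, or hand it to a callback when one is supplied. A minimal, engine-free sketch of that pattern follows; `check_session` and the sample bodies are hypothetical, and a real check would issue an HTTP request instead of inspecting a string.

```ruby
# Hypothetical checker mirroring the shape of `to :check do |async|`.
# With a callback the result is delivered through it; without one it is returned.
def check_session( body, async = nil )
  success = body.include?( '<b>admin' )
  return success unless async

  async.call( success )
  nil
end

# Synchronous use: the caller gets the result back.
sync_result = check_session( '<p>Welcome <b>admin</b></p>' )
puts "logged #{sync_result ? 'in' : 'out'}!"

# Callback use: the result arrives through the callable.
async_result = nil
check_session( '<p>Please log in</p>', ->( ok ) { async_result = ok } )
puts "logged #{async_result ? 'in' : 'out'}!"
```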

Data

Encapsulates functionality that has to do with the data of SCNR::Engine.

SCNR::Engine::API.run do

Data {

Sitemap {}

Urls {}

Pages {}

Issues {}

}

end

Sitemap

SCNR::Engine::API.run do

Data {

Sitemap {

on :new do |entry|

p entry

# => { "http://example.com" => 200 }

# URL => HTTP code

end

}

}

end

Example

bin/spectre http://example.com/ --checks=- --script=sitemap.rb
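Since each entry is a one-pair Hash of URL to HTTP code, entries can be merged and regrouped with plain Ruby. The sketch below works on made-up sample entries and does not depend on the engine:

```ruby
# Sample entries shaped like the ones shown above (URL => HTTP code).
entries = [
  { 'http://example.com/'        => 200 },
  { 'http://example.com/missing' => 404 },
  { 'http://example.com/about'   => 200 }
]

# Merge the one-pair Hashes into a single sitemap.
sitemap = entries.reduce( {} ) { |all, entry| all.merge( entry ) }

# Group the URLs by their response code.
by_code = sitemap.group_by { |_, code| code }.
  transform_values { |pairs| pairs.map( &:first ) }

by_code.each do |code, urls|
  puts "[#{code}] #{urls.join( ', ' )}"
end
```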

Urls

SCNR::Engine::API.run do

Data {

Urls {

on :new do |url|

p url

# => "http://example.com"

end

}

}

end

Example

bin/spectre http://example.com/ --checks=- --script=urls.rb

Pages

SCNR::Engine::API.run do

Data {

Pages {

on :new do |page|

p page

# => #<SCNR::Engine::Page:7240 @url="http://testhtml5.vulnweb.com/ajax/popular?offset=0" @dom=#<SCNR::Engine::Page::DOM:7260 @url="http://testhtml5.vulnweb.com/ajax/popular?offset=0" @transitions=1 @data_flow_sinks=0 @execution_flow_sinks=0>>

end

}

}

end

Example

bin/spectre http://testhtml5.vulnweb.com/ --checks=- --script=pages.rb

Issues

SCNR::Engine::API.run do

Data {

Issues {

on :new do |issue|

p issue

# => #<SCNR::Engine::Issue:0x00007f8c50d825a0 @name="Allowed HTTP methods", @description="\nThere are a number of HTTP methods that can be used on a webserver (`OPTIONS`,\n`HEAD`, `GET`, `POST`, `PUT`, `DELETE` etc.). Each of these methods perform a\ndifferent function and each have an associated level of risk when their use is\npermitted on the webserver.\n\nA client can use the `OPTIONS` method within a request to query a server to\ndetermine which methods are allowed.\n\nCyber-criminals will almost always perform this simple test as it will give a\nvery quick indication of any high-risk methods being permitted by the server.\n\nSCNR::Engine discovered that several methods are supported by the server.\n", @references={"Apache.org"=>"http://httpd.apache.org/docs/2.2/mod/core.html#limitexcept"}, @tags=["http", "methods", "options"], @severity=#<SCNR::Engine::Issue::Severity::Base:0x00007f8c50dccee8 @severity=:informational>, @remedy_guidance="\nIt is recommended that a whitelisting approach be taken to explicitly permit the\nHTTP methods required by the application and block all others.\n\nTypically the only HTTP methods required for most applications are `GET` and\n`POST`. 
All other methods perform actions that are rarely required or perform\nactions that are inherently risky.\n\nThese risky methods (such as `PUT`, `DELETE`, etc) should be protected by strict\nlimitations, such as ensuring that the channel is secure (SSL/TLS enabled) and\nonly authorised and trusted clients are permitted to use them.\n", @check={:name=>"Allowed methods", :description=>"Checks for supported HTTP methods.", :elements=>[SCNR::Engine::Element::Server], :cost=>1, :author=>"Tasos \"Zapotek\" Laskos <[email protected]>", :version=>"0.2", :shortname=>"allowed_methods"}, @vector=#<SCNR::Engine::Element::Server url="http://example.com/">, @proof="OPTIONS, GET, HEAD, POST", @referring_page=#<SCNR::Engine::Page:6560 @url="http://example.com/" @dom=#<SCNR::Engine::Page::DOM:6580 @url="http://example.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0>>, @platform_name=nil, @platform_type=nil, @page=#<SCNR::Engine::Page:6600 @url="http://example.com/" @dom=#<SCNR::Engine::Page::DOM:6620 @url="http://example.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0>>, @remarks={}, @trusted=true>

end

# Disables Issue storage.

disable :storage

}

}

end

Example

bin/spectre http://example.com/ --checks=allowed_methods --script=issues.rb

State

SCNR::Engine::API.run do

State {

on :change do |state|

p state.status

# => :preparing

end

}

end

Example

bin/spectre http://example.com/ --checks=- --script=state.rb

Browserpool

SCNR::Engine::API.run do

Browserpool {

# When a job is queued.

on :job do |job|

p job

# => #<SCNR::Engine::BrowserPool::Jobs::DOMExploration:6140 @resource=#<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false>

end

# When a job has completed.

on :job_done do |job|

p job

# => #<SCNR::Engine::BrowserPool::Jobs::DOMExploration:6140 @resource=#<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0> time=2.805975399 timed_out=false>

end

# When a job has yielded a result.

on :result do |result|

p result

# => #<SCNR::Engine::BrowserPool::Jobs::DOMExploration::Result:0x00007f51a167c218 @page=#<SCNR::Engine::Page:7340 @url="http://testhtml5.vulnweb.com/ajax/popular?offset=0" @dom=#<SCNR::Engine::Page::DOM:7360 @url="http://testhtml5.vulnweb.com/ajax/popular?offset=0" @transitions=1 @data_flow_sinks=0 @execution_flow_sinks=0>>, @job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration:7320 @resource= time= timed_out=false>>

end

}

end

Example

bin/spectre http://testhtml5.vulnweb.com/ --checks=- --script=browserpool.rb

Dom

SCNR::Engine::API.run do

Dom {

before :load do |resource, options, browser|

p resource

# => #<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0>

p options

# => {:take_snapshot=>true}

p browser

# => #<SCNR::Engine::BrowserPool::Worker pid= job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration:6140 @resource=#<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false> last-url=nil transitions=0>

end

before :event do |locator, event, options, browser|

p locator

# => <li class="active" id="popularLi">

p event

# => :click

p options

# => {}

p browser

# => #<SCNR::Engine::BrowserPool::Worker pid= job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration::EventTrigger:7760 @resource=#<SCNR::Engine::Page::DOM:7720 @url="http://testhtml5.vulnweb.com/#/popular" @transitions=17 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false> last-url="http://testhtml5.vulnweb.com/" transitions=17>

end

on :event do |success, locator, event, options, browser|

p success

# => true

p locator

# => <li class="active" id="popularLi">

p event

# => :click

p options

# => {}

p browser

# => #<SCNR::Engine::BrowserPool::Worker pid= job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration::EventTrigger:7760 @resource=#<SCNR::Engine::Page::DOM:7720 @url="http://testhtml5.vulnweb.com/#/popular" @transitions=17 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false> last-url="http://testhtml5.vulnweb.com/" transitions=17>

end

after :load do |resource, options, browser|

p resource

# => #<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0>

p options

# => {:take_snapshot=>true}

p browser

# => #<SCNR::Engine::BrowserPool::Worker pid= job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration:6140 @resource=#<SCNR::Engine::Page::DOM:6160 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false> last-url="http://testhtml5.vulnweb.com/" transitions=17>

end

after :event do |transition, locator, event, options, browser|

p transition

# => #<SCNR::Engine::Page::DOM::Transition:0x00007f50fc0739c0 @options={}, @event=:click, @element=<a data-scnr-engine-id="1270713017" href="#/popular">, @clock=nil, @time=0.036003384>

p locator

# => <a data-scnr-engine-id="1270713017" href="#/popular">

p event

# => :click

p options

# => {}

p browser

# => #<SCNR::Engine::BrowserPool::Worker pid= job=#<SCNR::Engine::BrowserPool::Jobs::DOMExploration::EventTrigger:7680 @resource=#<SCNR::Engine::Page::DOM:7620 @url="http://testhtml5.vulnweb.com/#/popular" @transitions=17 @data_flow_sinks=0 @execution_flow_sinks=0> time= timed_out=false> last-url="http://testhtml5.vulnweb.com/" transitions=17>

end

}

end

Example

bin/spectre http://testhtml5.vulnweb.com/ --checks=- --script=dom.rb

Http

SCNR::Engine::API.run do

Http {

on :request do |request|

p request

# => #<SCNR::Engine::HTTP::Request @id= @mode=async @method=get @url="https://wordpress.com/" @parameters={} @high_priority= @performer=#<SCNR::Engine::Framework (scanning) runtime=0.805773919 found-pages=0 audited-pages=0 issues=0 checks= plugins=autothrottle,healthmap,discovery,timing_attacks,uniformity>>

end

on :response do |response|

p response

# => #<SCNR::Engine::HTTP::Response:0x00007fd6e75923b8 ..>

end

on :cookies do |cookies|

p cookies

# => [#<SCNR::Engine::Element::Cookie (get) url="https://wordpress.com/start/?ref=logged-out-homepage-lp" action="https://wordpress.com/start/?ref=logged-out-homepage-lp" default-inputs={"country_code"=>"GR"} inputs={"country_code"=>"GR"} raw_inputs=[] >]

end

# Block to run after each HTTP request batch run.

after :run do

end

}

end

Example

bin/spectre https://wordpress.com --checks=- --script=http.rb

Input

SCNR::Engine::API.run do

Input {

# Fill in values for the given element; must return a Hash rather than alter the element.

values do |element|

p element

# => #<SCNR::Engine::Element::Form (post) auditor=SCNR::Engine::Trainer::SinkTracer url="http://testhtml5.vulnweb.com/" action="http://testhtml5.vulnweb.com/login" default-inputs={"username"=>"admin", "password"=>"", "loginFormSubmit"=>""} inputs={"username"=>"admin", "password"=>"5543!%scnr_engine_secret", "loginFormSubmit"=>"1"} raw_inputs=[] >

element.inputs

end

}

end

Example

bin/spectre https://testhtml5.vulnweb.com --checks=xss --script=input.rb
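The requirement to return a Hash instead of altering the element can be illustrated without the engine. In this sketch the `fill_in` helper, its defaults and the fallback value `'1'` are all hypothetical; the point is only that the input Hash stays untouched and a new one is returned.

```ruby
# Hypothetical fill-in routine: builds a new Hash, leaving `inputs` as it was.
# `defaults` maps input names to values; '1' is an arbitrary fallback.
def fill_in( inputs, defaults = { 'password' => 'secret' } )
  inputs.map { |name, value|
    [name, value.to_s.empty? ? defaults.fetch( name, '1' ) : value]
  }.to_h
end

# Frozen, so any attempt to modify it in place would raise.
inputs = { 'username' => 'admin', 'password' => '', 'loginFormSubmit' => '' }.freeze

filled = fill_in( inputs )
p filled
```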

Logging

SCNR::Engine::API.run do

Logging {

# Will get called for each error message that is logged.

on :error do |error|

p error

# => "Error string"

end

# Will get called for each exception that is created, even if safely handled.

on :exception do |exception|

p exception

# => #<SCNR::Engine::URICommon::Error: Failed to parse URL.>

end

}

end

Example

bin/spectre https://example.com --checks=- --script=logging.rb

Checks

SCNR::Engine::API.run do

Checks {

# Will get called for each check that is run.

on :run do |check|

p check

# => #<SCNR::Engine::Checks::BackupDirectories:0x00007f2d55c66bf8 @page=#<SCNR::Engine::Page:7920 @url="http://testhtml5.vulnweb.com/" @dom=#<SCNR::Engine::Page::DOM:7940 @url="http://testhtml5.vulnweb.com/" @transitions=0 @data_flow_sinks=0 @execution_flow_sinks=0>>>

end

# This will run from the context of SCNR::Engine::Check::Base; it

# basically creates a new check component on the fly.

#

# This one does something really simple, logs an issue for each 404 page.

as :not_found,

issue: {

name: 'Page not found',

severity: SCNR::Engine::Issue::Severity::INFORMATIONAL

} do

response = page.response

next if response.code != 404

log(

proof: response.status_line,

vector: SCNR::Engine::Element::Server.new( response.url ),

response: response

)

end

}

end

Example

bin/spectre http://testhtml5.vulnweb.com --script=checks.rb

Plugins

SCNR::Engine::API.run do

Plugins {

# Will get called upon plugin class initialization.

on :initialize do |plugin|

p plugin

# => #<SCNR::Engine::Plugins::AutoThrottle:0x00007f8896ccacd8 @options={}>

end

# Will get called when each plugin's #prepare method is called.

on :prepare do |plugin|

end

# Will get called when each plugin's #run method is called.

on :run do |plugin|

end

# Will get called when each plugin's #clean_up method is called.

on :clean_up do |plugin|

end

# Will get called when each plugin is done running.

on :done do |plugin|

end

# This will run from the context of SCNR::Engine::Plugin::Base; it

# basically creates a new plugin component on the fly.

as :my_plugin do

# Do stuff then wait until scan completes.

wait_while_framework_running

# Do stuff after scan completes.

end

}

end

Example

bin/spectre http://testhtml5.vulnweb.com --checks=- --script=plugins.rb

Fingerprinters

SCNR::Engine::API.run do

Fingerprinters {

# Identify `*.x` resources as PHP.

as :x_as_php do

next unless extension == 'x'

platforms << :php

end

}

end

Example

bin/spectre http://testhtml5.vulnweb.com --checks=- --script=fingerprinters.rb
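
To see what the `as :x_as_php` fingerprinter decides for a given URL, the same rule can be sketched in plain Ruby. The helpers `resource_extension` and `fingerprint` are hypothetical names introduced here; how the engine itself derives `extension` (for example, whether it lowercases it) is not stated above, so that part is an assumption.

```ruby
require 'uri'

# Hypothetical helper: returns the lowercased file extension of a URL's
# path without the leading dot, or nil when the path has none.
def resource_extension(url)
  ext = File.extname(URI.parse(url).path.to_s)
  ext.empty? ? nil : ext.delete_prefix('.').downcase
end

# Mirrors the `as :x_as_php` block: tag `*.x` resources as PHP and
# leave the platform list untouched for everything else.
def fingerprint(url, platforms = [])
  return platforms unless resource_extension(url) == 'x'

  platforms << :php
end

p fingerprint('http://example.com/index.x?id=1')
p fingerprint('http://example.com/index.html')
```

The query string does not affect the result because only the path component is inspected.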

Scan

Encapsulates scan-related functionality: options, scope, session handling, page-audit callbacks and run control.

SCNR::Engine::API.run do

Scan {

Options {}

Scope {}

Session {}

# Called before each page audit.

before :page do |page|

end

# Called on page audit.

on :page do |page|

end

# Called after a page audit.

after :page do |page|

end

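# Perform the scan and receive the report and statistics in a block.

run! do |report, statistics|

end

# Perform the scan and receive the report and statistics as return values.

report, statistics = self.run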

# Get scan progress.

progress = self.progress

# Get scan progress updates for session :my_session (any user-provided session ID will do).

progress = self.session_progress( :my_session )

sitemap = self.sitemap

status = self.status

issues = self.issues

statistics = self.statistics

is_running = self.running?

is_scanning = self.scanning?

# Pauses the scan.

self.pause!

# Resumes the scan.

self.resume!

# Aborts the scan.

self.abort!

# Suspends the scan.

self.suspend!

is_pausing = self.pausing?

is_paused = self.paused?

is_suspending = self.suspending?

is_suspended = self.suspended?

# Restores a scan from the snapshot at snapshot_path.

self.restore!( snapshot_path )

# Get a scan report.

report = self.generate_report

}

end

Example

SCNR::UI::CLI::Output.mute

api = SCNR::Engine::API.new

api.scan.options.set url: 'http://testhtml5.vulnweb.com',

checks: %w(allowed_methods interesting_responses)

api.state.on :change do |state|

puts "Status:"

ap state.status

end

api.data.sitemap.on :new do |entry|

puts "Sitemap entry:"

ap entry

end

api.data.issues.on :new do |issue|

puts "New issue:"

ap issue

end

scan_thread = Thread.new { api.scan.run }

while scan_thread.alive?

puts "Progress update:"

ap api.scan.session_progress( :session )

sleep 1

end

ap api.scan.generate_report

Assuming the above is saved as html5.scanner.rb:

bin/spectre_script html5.scanner.rb
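
The example above runs the scan in a background thread and polls for progress while that thread is alive. The same pattern can be tried in isolation with standard Ruby only. In this sketch the worker block is a hypothetical stand-in for `api.scan.run`, and `done_pages` stands in for whatever `session_progress` would report.

```ruby
done_pages = 0
lock = Mutex.new

# Stand-in for `api.scan.run`: a short job that updates a shared
# counter (hypothetical; the real call blocks until the scan ends).
worker = Thread.new do
  5.times do
    sleep 0.05
    lock.synchronize { done_pages += 1 }
  end
  :report # value of the block, retrievable through Thread#value
end

# Poll while the worker is alive, as the example does with
# `scan_thread.alive?`.
updates = []
while worker.alive?
  updates << lock.synchronize { done_pages }
  sleep 0.02
end

# Thread#value waits for the thread and returns the block's value; it
# also re-raises any exception that terminated the thread.
result = worker.value
puts "Updates seen: #{updates.inspect}, result: #{result.inspect}"
```

Calling `Thread#value` (or `Thread#join`) after the loop is a cheap way to make sure an exception raised inside the worker is not silently lost.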

Options

SCNR::Engine::API.run do

Scan {

Options {

# Sets options.

set({

url: 'http://testhtml5.vulnweb.com',

audit: {

parameter_values: true,

paranoia: :medium,

exclude_vector_patterns: [],

include_vector_patterns: [],

link_templates: []

},

device: {

visible: false,

width: 1600,

height: 1200,

user_agent: "Mozilla/5.0 (Gecko) SCNR::Engine/v1.0dev",

pixel_ratio: 1.0,

touch: false

},

dom: {

engine: :chrome,

local_storage: {},

session_storage: {},

wait_for_elements: {},

pool_size: 4,

job_timeout: 60,

worker_time_to_live: 250,

wait_for_timers: false

},

http: {

request_timeout: 20000,

request_redirect_limit: 5,

request_concurrency: 10,

request_queue_size: 50,

request_headers: {},

response_max_size: 500000,

cookies: {},

authentication_type: "auto"

},

input: {

values: {},

default_values: {

"name" => "scnr_engine_name",

"user" => "scnr_engine_user",

"usr" => "scnr_engine_user",

"pass" => "5543!%scnr_engine_secret",

"txt" => "scnr_engine_text",

"num" => "132",

"amount" => "100",

"mail" => "[email protected]",

"account" => "12",

"id" => "1"

},

without_defaults: false,

force: false

},

scope: {

directory_depth_limit: 10,

auto_redundant_paths: 15,

redundant_path_patterns: {},

dom_depth_limit: 4,

dom_event_limit: 500,

dom_event_inheritance_limit: 500,

exclude_file_extensions: [],

exclude_path_patterns: [],

exclude_content_patterns: [],

include_path_patterns: [],

restrict_paths: [],

extend_paths: [],

url_rewrites: {}

},

session: {},

checks: [

"*"

],

platforms: [],

plugins: {},

no_fingerprinting: false,

authorized_by: nil

})

}

}

end
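
When only a few of these options need to change, it can be convenient to keep a base hash and layer overrides on top of it before passing the result to `set`. The sketch below does that merge by hand in plain Ruby. `DEFAULTS` copies a handful of values from the listing above and `deep_merge` is a hypothetical helper; whether `set` itself merges a partial hash with the engine's defaults is not stated here, so this example does not rely on it.

```ruby
# A few of the values from the listing above, copied verbatim.
DEFAULTS = {
  http:   { request_timeout: 20000, request_concurrency: 10 },
  scope:  { directory_depth_limit: 10, dom_depth_limit: 4 },
  checks: ['*']
}.freeze

# Recursively merge nested hashes; for any other value the override wins.
def deep_merge(base, overrides)
  base.merge(overrides) do |_key, old, new|
    old.is_a?(Hash) && new.is_a?(Hash) ? deep_merge(old, new) : new
  end
end

options = deep_merge(DEFAULTS, {
  url:    'http://testhtml5.vulnweb.com',
  http:   { request_concurrency: 2 },
  checks: %w(xss)
})

p options
```

A plain `Hash#merge` would replace the whole `http:` group with the override, dropping `request_timeout`; the recursive version keeps sibling keys intact.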

Scope

Determines which resources are in or out of scope. The return value of each block is cast to boolean.

SCNR::Engine::API.run do

Scan {

Scope {

select :url do |url|

end

select :page do |page|

end

select :element do |element|

end

select :event do |locator, event, options, browser|

end

reject :url do |url|

end

reject :page do |page|

end

reject :element do |element|

end

reject :event do |locator, event, options, browser|

end

}

}

end

Example

bin/spectre http://testhtml5.vulnweb.com --checks=- --script=scope.rb
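
Because block return values are cast to boolean, a block does not have to return a literal `true` or `false`. The plain-Ruby sketch below shows how typical return values behave under Ruby's truthiness rules. The `cast` helper and the two lambdas are hypothetical illustrations of what a `select :url` or `reject :url` block might contain, and it is an assumption that the engine's cast follows standard Ruby truthiness.

```ruby
require 'uri'

# Ruby treats only nil and false as falsy, so `!!` turns every other
# value (including 0, "" and []) into true.
def cast(value)
  !!value
end

# Hypothetical predicates of the kind a scope block might hold.
select_url = ->(url) { URI.parse(url).host == 'testhtml5.vulnweb.com' }
reject_url = ->(url) { url =~ /logout/ } # returns an Integer or nil

urls = %w(
  http://testhtml5.vulnweb.com/
  http://testhtml5.vulnweb.com/logout
  http://example.com/
)

urls.each do |url|
  puts "#{url} select=#{cast(select_url.call(url))} " \
       "reject=#{cast(reject_url.call(url))}"
end
```

The regular expression match is the case to watch: it returns a match index on success, and an index of `0` still counts as true.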

Session

SCNR::Engine::API.run do

Scan {

Session {

to :login do |browser|

# Login with whichever interface you prefer.

watir = browser.watir

selenium = browser.selenium

watir.goto SCNR::Engine::Options.url

watir.link( href: '#myModal' ).click

form = watir.form( id: 'loginForm' )

form.text_field( name: 'username' ).set 'admin'

form.text_field( name: 'password' ).set 'admin'

form.submit

end

to :check do |async|

http_client = SCNR::Engine::HTTP::Client

check = proc { |r| r.body.optimized_include? '<b>admin' }

# If an async block is passed, the framework prefers that the check

# be scheduled to run asynchronously.

if async